diff --git a/NEW/kubernetes资源对象Service.md b/NEW/kubernetes资源对象Service.md

new file mode 100644

index 0000000..0fdf08a

--- /dev/null

+++ b/NEW/kubernetes资源对象Service.md

@@ -0,0 +1,233 @@

+Kubernetes Resource Object: Service

+

+Author: 行癫 <Piracy will be prosecuted>

+

+------

+

+## I: Service

+

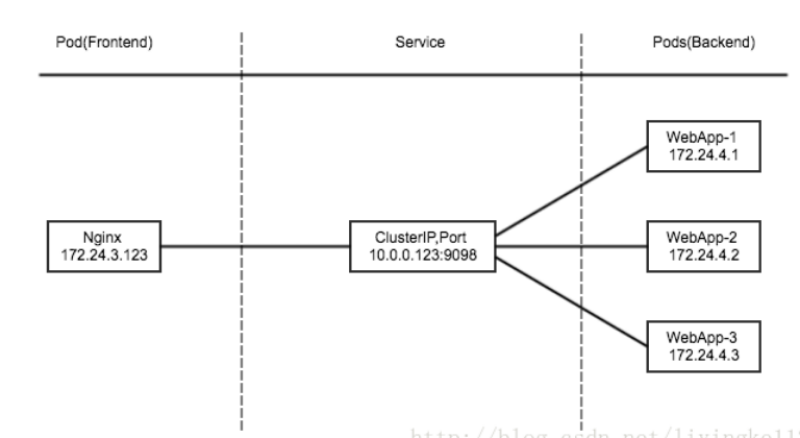

+ A Service is an abstract way to expose an application running on a set of [Pods](https://v1-23.docs.kubernetes.io/docs/concepts/workloads/pods/pod-overview/) as a network service.

+

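+ With Kubernetes, you do not need to modify your application to use an unfamiliar service discovery mechanism. Kubernetes gives each Pod its own IP address, gives a set of Pods a single DNS name, and can load-balance across them.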

+

+ A Kubernetes Service defines an abstraction: a logical set of Pods plus a policy for accessing them. This pattern is often called a microservice.

+

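+ For example, consider an image-processing backend that runs with 3 replicas. The replicas are interchangeable: frontends do not care which backend replica they call. The Pods that make up the backend may change over time, but frontend clients should not need to know about that, nor should they have to keep track of the set of backends themselves.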

+

+#### 1. Defining a Service

+

+For example, suppose you have a set of Pods that each listen on TCP port 9376 and carry the label `app=MyApp`:

+

+```yaml
+apiVersion: v1
+kind: Service
+metadata:
+  name: my-service
+spec:
+  selector:
+    app: MyApp
+  ports:
+    - protocol: TCP
+      port: 80
+      targetPort: 9376
+```

+

+The manifest above creates a Service object named "my-service" that forwards requests to TCP port 9376 on any Pod carrying the label `app=MyApp`.

+

+Kubernetes assigns the Service an IP address (sometimes called the "cluster IP"), which the Service proxies use.

+

+Note:

+

+ A Service can map any incoming `port` to any `targetPort`. By default, `targetPort` is set to the same value as the `port` field.

+

+#### 2. Multi-Port Services

+

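+ Some Services need to expose more than one port. Kubernetes lets you configure multiple port definitions on a Service object. When a Service has more than one port, each port must be given a name so that it is unambiguous.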

+

+```yaml
+apiVersion: v1
+kind: Service
+metadata:
+  name: my-service
+spec:
+  selector:
+    app: MyApp
+  ports:
+    - name: http
+      protocol: TCP
+      port: 80
+      targetPort: 9376
+    - name: https
+      protocol: TCP
+      port: 443
+      targetPort: 9377
+```

+

+## II: Publishing Services

+

+#### 1. Service Types

+

+ClusterIP

+

+NodePort

+

+LoadBalancer

+

+ExternalName

+

+#### 2. Behavior of Each Service Type

+

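+ For some parts of your application (for example, frontends), you may want to expose a Service on an external IP address, one that is reachable from outside the Kubernetes cluster.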

+

+ Kubernetes `ServiceTypes` let you specify the kind of Service you want. The default is `ClusterIP`.

+

+The `type` values and their behaviors are:

+

+ `ClusterIP`: exposes the Service on a cluster-internal IP. With this value the Service is reachable only from within the cluster. This is the default `ServiceType`.

+

+

+

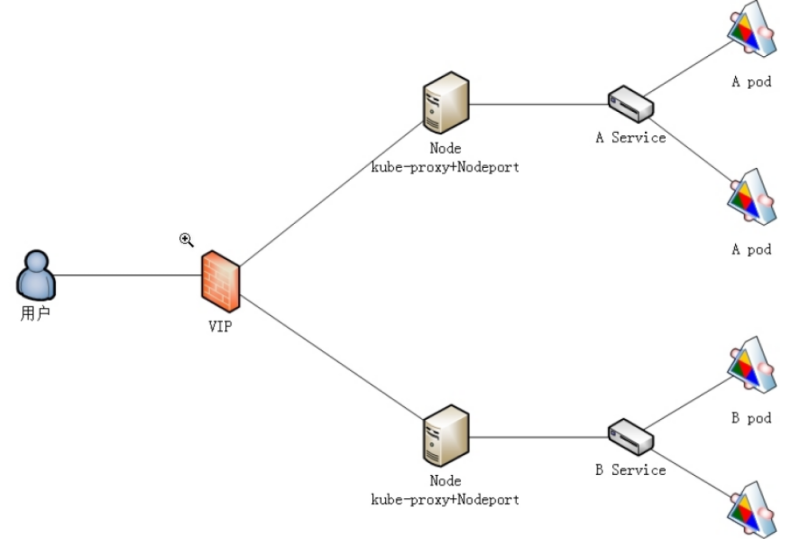

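+ `NodePort`: exposes the Service on each node's IP at a static port (the `NodePort`). A `NodePort` Service routes to a `ClusterIP` Service that is created automatically. You can reach a `NodePort` Service from outside the cluster by requesting `<NodeIP>:<NodePort>`.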

+

+

+

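+ [`LoadBalancer`](https://v1-23.docs.kubernetes.io/zh/docs/concepts/services-networking/service/#loadbalancer): exposes the Service externally using a cloud provider's load balancer. The external load balancer routes traffic to the `NodePort` and `ClusterIP` Services, which are created automatically.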

+

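+ You can also use Ingress to expose your Service. Ingress is not a Service type, but it acts as the entry point to your cluster. It lets you consolidate routing rules into a single resource, because it can expose multiple Services under the same IP address.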

+

+```shell

+[root@master nginx]# kubectl expose deployment nginx-deployment --port=80 --type=LoadBalancer

+```

+

+#### 3. NodePort

+

+ If you set the `type` field to `NodePort`, the Kubernetes control plane allocates a port from the range specified by the `--service-node-port-range` flag (default: 30000-32767).

+

+For example:

+

+```yaml
+apiVersion: v1
+kind: Service
+metadata:
+  name: my-service
+spec:
+  type: NodePort
+  selector:
+    app: MyApp
+  ports:
+    # By default, and for convenience, `targetPort` is set to the same value as the `port` field.
+    - port: 80
+      targetPort: 80
+      # Optional field.
+      # By default, and for convenience, the Kubernetes control plane allocates a port from a range (default: 30000-32767).
+      nodePort: 30007
+```
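
A manually chosen `nodePort` has to fall inside the configured range, so a small pre-flight check can catch a typo before the manifest is applied. The following is only a sketch: `valid_node_port` is a hypothetical helper name, and the bounds are the default range quoted above, which a cluster may override with `--service-node-port-range`.

```shell
#!/bin/sh
# Hypothetical helper: succeed when PORT is an integer inside [MIN, MAX].
# MIN and MAX default to the documented default range 30000-32767.
valid_node_port() {
    port=$1
    min=${2:-30000}
    max=${3:-32767}
    case $port in
        ''|*[!0-9]*) return 1 ;;   # reject empty or non-numeric input
    esac
    [ "$port" -ge "$min" ] && [ "$port" -le "$max" ]
}

# Check a few candidate values.
for p in 30007 30010 80; do
    if valid_node_port "$p"; then
        echo "nodePort $p: inside the default range"
    else
        echo "nodePort $p: outside the default range"
    fi
done
```

The optional second and third arguments let the same check be reused on a cluster whose range was customized.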

+

+#### 4. Example

+

+```yaml
+apiVersion: apps/v1
+kind: Deployment
+metadata:
+  name: nginx-deployment
+spec:
+  replicas: 3
+  selector:
+    matchLabels:
+      app: nginx
+  template:
+    metadata:
+      labels:
+        app: nginx
+    spec:
+      containers:
+      - name: nginx-server
+        image: nginx:1.16
+        ports:
+        - containerPort: 80
+```

+

+```yaml
+apiVersion: v1
+kind: Service
+metadata:
+  name: nginx-services
+  labels:
+    app: nginx
+spec:
+  type: NodePort
+  ports:
+  - port: 88
+    targetPort: 80
+    nodePort: 30010
+  selector:
+    app: nginx
+```

+

+#### 5. Good to Know

+

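+ `ExternalName` is one of the `Kubernetes` Service types. It maps a Service to an external DNS name instead of to a set of Pods defined by a selector: the cluster DNS answers lookups for the Service with a CNAME record pointing at that name. So when you access the Service name from inside the cluster, you are actually reaching the external resource.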

+

+Use cases

+

+ `ExternalName` Services are a good fit in the following situations:

+

+ When you need to point an in-cluster Service name at an external system or third-party API

+

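+ When you want to decouple the Service name from the name of the external resource, so that if the external resource changes you only need to update the Service configuration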

+

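+ For cross-team collaboration in a microservice architecture, where each team manages its own services and calls the other teams' services through `ExternalName`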

+

+Configuration

+

+ Creating an `ExternalName` Service is simple: in the Service manifest, set `spec.type` to `ExternalName` and provide the `spec.externalName` field with the external domain name you want to map to.

+

+```yaml
+apiVersion: v1
+kind: Service
+metadata:
+  name: external-service
+  namespace: default
+spec:
+  type: ExternalName
+  externalName: example.com
+```

+

+Example

+

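+ Suppose you run an e-commerce platform made up of multiple microservices, plus a dedicated external payment service that is hosted by a third-party provider under the domain `payments.externalprovider.com`.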

+

+Background

+

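+ Your platform needs to call the payment gateway to complete transactions. The gateway is provided by an external vendor and does not run inside your `Kubernetes` cluster, yet your application code should be able to reach it as conveniently as an internal service.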

+

+Using an `ExternalName` Service

+

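+ To simplify interaction with the external payment service, you can create an `ExternalName` Service named payment-gateway. All internal services can then reach the external payment service through payment-gateway.default.svc.cluster.local (assuming the default namespace) instead of hard-coding the external domain.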

+

+```yaml
+apiVersion: v1
+kind: Service
+metadata:
+  name: payment-gateway
+  namespace: default
+spec:
+  type: ExternalName
+  externalName: payments.externalprovider.com
+```
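
The in-cluster name used above follows the pattern `<service>.<namespace>.svc.<cluster-domain>`. As a minimal sketch, the helper below assembles that name; `service_fqdn` is a hypothetical function name, and the cluster domain is assumed to be the common default `cluster.local`, which a cluster may be configured to change.

```shell
#!/bin/sh
# Build the fully qualified in-cluster DNS name of a Service.
# Assumption: the cluster domain is "cluster.local" unless a third argument says otherwise.
service_fqdn() {
    name=$1
    namespace=${2:-default}
    domain=${3:-cluster.local}
    printf '%s.%s.svc.%s\n' "$name" "$namespace" "$domain"
}

# Name of the payment-gateway Service in the default namespace.
service_fqdn payment-gateway default
```

Pods in the same namespace can normally use the short name `payment-gateway`; the full form is what a client in another namespace would need.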

+

+Application code changes

+

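+ In your application code, you only need to configure the service name payment-gateway or, following the cluster's DNS resolution rules, the fully qualified domain name (FQDN) payment-gateway.default.svc.cluster.local. Keep in mind that with HTTP the request's `Host` header then carries the Service name rather than the external domain, and with HTTPS the provider's certificate will not match the Service name, which some external servers reject. For example, in a Java Spring Boot application you can set the base URL of the REST client: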

+

+```java
+@Bean
+public RestTemplate restTemplate(RestTemplateBuilder builder) {
+    // Note: this uses the Service name rather than the external domain directly
+    return builder.rootUri("http://payment-gateway").build();
+}
+```

+

+Summary

+

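+ In this way, an `ExternalName` Service lets you integrate external dependencies into a `Kubernetes` environment cleanly, while keeping a good level of abstraction and flexibility.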

diff --git a/NEW/kubernetes资源对象Volumes.md b/NEW/kubernetes资源对象Volumes.md

new file mode 100644

index 0000000..64ac364

--- /dev/null

+++ b/NEW/kubernetes资源对象Volumes.md

@@ -0,0 +1,141 @@

+Kubernetes Resource Object: Volumes

+

+Author: 行癫 <Piracy will be prosecuted>

+

+------

+

+## I: Volumes

+

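+ Files on disk in a container are ephemeral, which causes problems for non-trivial applications running in containers. One problem is that files are lost when a container crashes: the kubelet restarts the container, but it comes back in a clean state. A second problem arises when multiple containers run in the same Pod and need to share files. The Kubernetes volume abstraction solves both problems.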

+

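+ Docker also has a concept of volumes, though it is looser and less managed. A Docker volume is a directory on disk or in another container. Docker provides volume drivers, but their functionality is quite limited.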

+

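+ Kubernetes supports many types of volumes, and a Pod can use any number of volume types at the same time. Ephemeral volume types have the same lifetime as the Pod, whereas persistent volumes exist beyond the lifetime of a Pod. For any kind of volume, data is preserved across container restarts within the Pod. When a Pod ceases to exist, Kubernetes destroys its ephemeral volumes but not its persistent volumes.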

+

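+ At its core, a volume is a directory, possibly with some data in it, that is accessible to the containers in a Pod. The volume type in use determines how that directory comes to be, the medium that backs it, and its contents.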

+

+ To use a volume, specify the volumes to provide for the Pod in `.spec.volumes` and declare where to mount them in each container in `.spec.containers[*].volumeMounts`.

+

+#### 1. cephfs

+

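+ A `cephfs` volume lets you mount an existing CephFS volume into a Pod. Unlike `emptyDir`, which is erased when a Pod is removed, the contents of a `cephfs` volume are preserved when the Pod is removed; the volume is merely unmounted. This means that a `cephfs` volume can be pre-populated with data and that the data can be shared between Pods. A `cephfs` volume can be mounted by multiple writers simultaneously.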

+

+For detailed usage, see the official example:

+

+```shell

+https://github.com/kubernetes/examples/tree/master/volumes/cephfs/

+```

+

+#### 2. hostPath

+

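+ A `hostPath` volume mounts a file or directory from the host node's filesystem into your Pod. This is not something most Pods need, but it offers a powerful escape hatch for some applications. In addition to the required `path` property, you can optionally specify a `type`; the supported values are: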

+

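+| **Value** | **Behavior** |
+| :---------------: | :----------------------------------------------------------: |
+| | Empty string (default), for backward compatibility: no checks are performed before the hostPath volume is mounted. |
+| DirectoryOrCreate | If nothing exists at the given path, an empty directory is created there as needed, with permissions set to 0755 and the same group and ownership as the kubelet. |
+| Directory | A directory must exist at the given path. |
+| FileOrCreate | If nothing exists at the given path, an empty file is created there as needed, with permissions set to 0644 and the same group and ownership as the kubelet. |
+| File | A file must exist at the given path. |
+| Socket | A UNIX socket must exist at the given path. |
+| CharDevice | A character device must exist at the given path. |
+| BlockDevice | A block device must exist at the given path. |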

+

+hostPath configuration example:

+

+```yaml
+apiVersion: v1
+kind: Pod
+metadata:
+  name: test-pd
+spec:
+  containers:
+  - image: k8s.gcr.io/test-webserver
+    name: test-container
+    volumeMounts:
+    - mountPath: /test-pd
+      name: test-volume
+  volumes:
+  - name: test-volume
+    hostPath:
+      # Directory location on the host
+      path: /data
+      # This field is optional
+      type: Directory
+```

+

+Example:

+

+```yaml
+apiVersion: apps/v1
+kind: Deployment
+metadata:
+  name: deploy-tomcat-1
+  labels:
+    app: tomcat-1
+
+spec:
+  replicas: 2
+  selector:
+    matchLabels:
+      app: tomcat-1
+  template:
+    metadata:
+      labels:
+        app: tomcat-1
+    spec:
+      containers:
+      - name: tomcat-1
+        image: daocloud.io/library/tomcat:8-jdk8
+        imagePullPolicy: IfNotPresent
+        ports:
+        - containerPort: 8080
+        volumeMounts:
+        - mountPath: /usr/local/tomcat/webapps
+          name: xingdian
+      volumes:
+      - name: xingdian
+        hostPath:
+          path: /opt/apps/web
+          type: Directory
+---
+apiVersion: v1
+kind: Service
+metadata:
+  name: tomcat-service-1
+  labels:
+    app: tomcat-1
+spec:
+  type: NodePort
+  ports:
+  - port: 888
+    targetPort: 8080
+    nodePort: 30021
+  selector:
+    app: tomcat-1
+```
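
Because the volume above uses `type: Directory`, the path has to exist already on every node where a replica may be scheduled. One way to prepare a node is sketched below; `ensure_host_dir` is a hypothetical helper that mimics what `DirectoryOrCreate` would do (an empty directory with mode 0755), and the demonstration runs under a temporary root so that it does not touch the real `/opt/apps/web`.

```shell
#!/bin/sh
# Hypothetical helper: make sure a host directory exists before a Pod that
# mounts it with "type: Directory" is scheduled onto this node.
ensure_host_dir() {
    dir=$1
    if [ -d "$dir" ]; then
        echo "exists: $dir"
    else
        mkdir -p "$dir" && chmod 0755 "$dir" && echo "created: $dir"
    fi
}

# Demonstration under a throwaway root instead of the real /opt/apps/web.
demo_root=$(mktemp -d)
ensure_host_dir "$demo_root/opt/apps/web"   # first call creates the directory
ensure_host_dir "$demo_root/opt/apps/web"   # second call finds it in place
rm -rf "$demo_root"
```

On a real node the call would simply be `ensure_host_dir /opt/apps/web`, run as a user allowed to write under `/opt`.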

+


diff --git a/NEW/基于kubeadm部署kubernetes集群.md b/NEW/基于kubeadm部署kubernetes集群.md

new file mode 100644

index 0000000..cc91289

--- /dev/null

+++ b/NEW/基于kubeadm部署kubernetes集群.md

@@ -0,0 +1,217 @@

+Deploying a Kubernetes Cluster with kubeadm

+

+Author: 行癫 <Piracy will be prosecuted>

+

+------

+

+## I: Environment Preparation

+

+Four servers: one master and three worker nodes. The master node must have at least 2 CPU cores.

+

+| Node name | IP address |
+| :------: | :--------: |
+| master | 10.0.0.220 |
+| node-1 | 10.0.0.221 |
+| node-2 | 10.0.0.222 |
+| node-3 | 10.0.0.223 |

+

+#### 1. Disable the firewall and SELinux on all servers

+

+```shell
+[root@localhost ~]# systemctl stop firewalld
+[root@localhost ~]# systemctl disable firewalld
+[root@localhost ~]# setenforce 0
+[root@localhost ~]# sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config
+[root@localhost ~]# swapoff -a                            # disable swap until the next reboot
+[root@localhost ~]# sed -i 's/.*swap.*/#&/' /etc/fstab    # disable swap permanently
+# Note:
+#   Swap must be disabled on every server
+#   Run on all nodes
+```
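
Before moving on, it can be worth confirming that swap really is off, since the kubelet in its default configuration refuses to start while swap is enabled. The sketch below counts the active swap areas listed in `/proc/swaps`; `active_swap_count` is a hypothetical helper name, and the file argument exists only so the function can be tried against a sample file.

```shell
#!/bin/sh
# Count active swap areas in a /proc/swaps-style file.
# The first line of that file is a column header, so it is skipped.
active_swap_count() {
    swaps_file=${1:-/proc/swaps}
    awk 'NR > 1 && NF > 0 { n++ } END { print n + 0 }' "$swaps_file"
}

# Report the state of the current machine when /proc/swaps is available.
if [ -r /proc/swaps ]; then
    if [ "$(active_swap_count)" -eq 0 ]; then
        echo "swap is off"
    else
        echo "swap is still active: run 'swapoff -a' and check /etc/fstab"
    fi
fi
```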

+

+#### 2. Make sure the yum repositories are usable

+

+```shell
+[root@localhost ~]# yum clean all
+[root@localhost ~]# yum makecache fast
+# Note:
+#   Use a yum mirror hosted in China
+#   Run on all nodes
+```

+

+#### 3. Set the hostnames

+

+```shell
+[root@localhost ~]# hostnamectl set-hostname master    # on 10.0.0.220
+[root@localhost ~]# hostnamectl set-hostname node-1    # on 10.0.0.221
+[root@localhost ~]# hostnamectl set-hostname node-2    # on 10.0.0.222
+[root@localhost ~]# hostnamectl set-hostname node-3    # on 10.0.0.223
+# Note:
+#   Run the matching command on each node
+```

+

+#### 4. Add local name resolution

+

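+```shell
+# Host entries below are taken from the node table above.
+[root@master ~]# cat >> /etc/hosts <<EOF
+10.0.0.220 master
+10.0.0.221 node-1
+10.0.0.222 node-2
+10.0.0.223 node-3
+EOF
+# [Content missing from the source: the heredoc bodies written to
+#  /etc/yum.repos.d/kubernetes.repo, /etc/sysconfig/kubelet and /etc/sysctl.conf,
+#  and every step up to the node listing below, did not survive extraction.]
+node-1   Ready   <none>   4m45s   v1.23.1
+node-2   Ready   <none>   4m40s   v1.23.1
+node-3   Ready   <none>   4m46s   v1.23.1
+```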

+


diff --git a/NEW/基于kubeadm部署kubernetes集群1-25版本.md b/NEW/基于kubeadm部署kubernetes集群1-25版本.md

new file mode 100644

index 0000000..3a08218

--- /dev/null

+++ b/NEW/基于kubeadm部署kubernetes集群1-25版本.md

@@ -0,0 +1,953 @@

+Deploying a Kubernetes Cluster with kubeadm

+

+Author: 行癫 <Piracy will be prosecuted>

+

+------

+

+## I: Environment Preparation

+

+Four servers: one master and three worker nodes. The master node must have at least 2 CPU cores.

+

+| Node name | IP address |
+| :------: | :--------: |
+| master | 10.0.0.220 |
+| node-1 | 10.0.0.221 |
+| node-2 | 10.0.0.222 |
+| node-3 | 10.0.0.223 |

+

+#### 1. Disable the firewall and SELinux on all servers

+

+```shell
+[root@localhost ~]# systemctl stop firewalld
+[root@localhost ~]# systemctl disable firewalld
+[root@localhost ~]# setenforce 0
+[root@localhost ~]# sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config
+[root@localhost ~]# swapoff -a                            # disable swap until the next reboot
+[root@localhost ~]# sed -i 's/.*swap.*/#&/' /etc/fstab    # disable swap permanently
+# Note:
+#   Swap must be disabled on every server
+#   Run on all nodes
+```

+

+#### 2. Make sure the yum repositories are usable

+

+```shell
+[root@localhost ~]# yum clean all
+[root@localhost ~]# yum makecache fast
+# Note:
+#   Use a yum mirror hosted in China
+#   Run on all nodes
+```

+

+#### 3. Set the hostnames

+

+```shell
+[root@localhost ~]# hostnamectl set-hostname master    # on 10.0.0.220
+[root@localhost ~]# hostnamectl set-hostname node-1    # on 10.0.0.221
+[root@localhost ~]# hostnamectl set-hostname node-2    # on 10.0.0.222
+[root@localhost ~]# hostnamectl set-hostname node-3    # on 10.0.0.223
+# Note:
+#   Run the matching command on each node
+```

+

+#### 4. Add local name resolution

+

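+```shell
+# Host entries below are taken from the node table above.
+[root@master ~]# cat >> /etc/hosts <<EOF
+10.0.0.220 master
+10.0.0.221 node-1
+10.0.0.222 node-2
+10.0.0.223 node-3
+EOF
+# [Content missing from the source: the heredoc bodies written to
+#  /etc/yum.repos.d/kubernetes.repo (two of them) and /etc/sysctl.conf,
+#  and every step up to the node listing below, did not survive extraction.]
+node-1   Ready   <none>   2m7s   v1.25.0
+node-2   Ready   <none>   50s    v1.25.0
+node-3   Ready   <none>   110s   v1.25.0
+```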

+

+## III: Deploying the Dashboard

+

+#### 1. Enable IPVS mode in kube-proxy

+

+```shell

+[root@master ~]# kubectl get configmap kube-proxy -n kube-system -o yaml > kube-proxy-configmap.yaml

+[root@master ~]# sed -i 's/mode: ""/mode: "ipvs"/' kube-proxy-configmap.yaml

+[root@master ~]# kubectl apply -f kube-proxy-configmap.yaml

+[root@master ~]# rm -f kube-proxy-configmap.yaml

+[root@master ~]# kubectl get pod -n kube-system | grep kube-proxy | awk '{system("kubectl delete pod "$1" -n kube-system")}'

+```
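
The `sed` substitution in the second command only takes effect when the ConfigMap still contains an empty `mode: ""`; if a mode is already set, the file is left unchanged and nothing is reported. As a rough illustration, the same expression can be tried on sample text first; `set_ipvs_mode` is a hypothetical wrapper and the two input lines are made up for the demonstration.

```shell
#!/bin/sh
# Apply the same substitution as the kube-proxy step, reading from stdin.
set_ipvs_mode() {
    sed 's/mode: ""/mode: "ipvs"/'
}

# An empty mode is rewritten to ipvs.
printf '%s\n' '    mode: ""' | set_ipvs_mode

# A mode that is already set does not match and passes through unchanged.
printf '%s\n' '    mode: "iptables"' | set_ipvs_mode
```

If the second case applies to a cluster, the expression would need to be adapted, or the ConfigMap edited by hand.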

+

+#### 2. Dashboard installation manifest

+

+```shell

+[root@master ~]# cat dashboard.yaml

+# Copyright 2017 The Kubernetes Authors.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+apiVersion: v1
+kind: Namespace
+metadata:
+  name: kubernetes-dashboard
+
+---
+
+apiVersion: v1
+kind: ServiceAccount
+metadata:
+  labels:
+    k8s-app: kubernetes-dashboard
+  name: kubernetes-dashboard
+  namespace: kubernetes-dashboard
+
+---
+
+kind: Service
+apiVersion: v1
+metadata:
+  labels:
+    k8s-app: kubernetes-dashboard
+  name: kubernetes-dashboard
+  namespace: kubernetes-dashboard
+spec:
+  type: NodePort
+  ports:
+    - port: 443
+      targetPort: 8443
+  selector:
+    k8s-app: kubernetes-dashboard
+
+---
+
+apiVersion: v1
+kind: Secret
+metadata:
+  labels:
+    k8s-app: kubernetes-dashboard
+  name: kubernetes-dashboard-certs
+  namespace: kubernetes-dashboard
+type: Opaque
+
+---
+
+apiVersion: v1
+kind: Secret
+metadata:
+  labels:
+    k8s-app: kubernetes-dashboard
+  name: kubernetes-dashboard-csrf
+  namespace: kubernetes-dashboard
+type: Opaque
+data:
+  csrf: ""
+
+---
+
+apiVersion: v1
+kind: Secret
+metadata:
+  labels:
+    k8s-app: kubernetes-dashboard
+  name: kubernetes-dashboard-key-holder
+  namespace: kubernetes-dashboard
+type: Opaque
+
+---
+
+kind: ConfigMap
+apiVersion: v1
+metadata:
+  labels:
+    k8s-app: kubernetes-dashboard
+  name: kubernetes-dashboard-settings
+  namespace: kubernetes-dashboard
+
+---
+
+kind: Role
+apiVersion: rbac.authorization.k8s.io/v1
+metadata:
+  labels:
+    k8s-app: kubernetes-dashboard
+  name: kubernetes-dashboard
+  namespace: kubernetes-dashboard
+rules:
+  # Allow Dashboard to get, update and delete Dashboard exclusive secrets.
+  - apiGroups: [""]
+    resources: ["secrets"]
+    resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs", "kubernetes-dashboard-csrf"]
+    verbs: ["get", "update", "delete"]
+    # Allow Dashboard to get and update 'kubernetes-dashboard-settings' config map.
+  - apiGroups: [""]
+    resources: ["configmaps"]
+    resourceNames: ["kubernetes-dashboard-settings"]
+    verbs: ["get", "update"]
+    # Allow Dashboard to get metrics.
+  - apiGroups: [""]
+    resources: ["services"]
+    resourceNames: ["heapster", "dashboard-metrics-scraper"]
+    verbs: ["proxy"]
+  - apiGroups: [""]
+    resources: ["services/proxy"]
+    resourceNames: ["heapster", "http:heapster:", "https:heapster:", "dashboard-metrics-scraper", "http:dashboard-metrics-scraper"]
+    verbs: ["get"]
+
+---
+
+kind: ClusterRole
+apiVersion: rbac.authorization.k8s.io/v1
+metadata:
+  labels:
+    k8s-app: kubernetes-dashboard
+  name: kubernetes-dashboard
+rules:
+  # Allow Metrics Scraper to get metrics from the Metrics server
+  - apiGroups: ["metrics.k8s.io"]
+    resources: ["pods", "nodes"]
+    verbs: ["get", "list", "watch"]
+
+---
+
+apiVersion: rbac.authorization.k8s.io/v1
+kind: RoleBinding
+metadata:
+  labels:
+    k8s-app: kubernetes-dashboard
+  name: kubernetes-dashboard
+  namespace: kubernetes-dashboard
+roleRef:
+  apiGroup: rbac.authorization.k8s.io
+  kind: Role
+  name: kubernetes-dashboard
+subjects:
+  - kind: ServiceAccount
+    name: kubernetes-dashboard
+    namespace: kubernetes-dashboard
+
+---
+
+apiVersion: rbac.authorization.k8s.io/v1
+kind: ClusterRoleBinding
+metadata:
+  name: kubernetes-dashboard
+roleRef:
+  apiGroup: rbac.authorization.k8s.io
+  kind: ClusterRole
+  name: kubernetes-dashboard
+subjects:
+  - kind: ServiceAccount
+    name: kubernetes-dashboard
+    namespace: kubernetes-dashboard
+
+---
+
+kind: Deployment
+apiVersion: apps/v1
+metadata:
+  labels:
+    k8s-app: kubernetes-dashboard
+  name: kubernetes-dashboard
+  namespace: kubernetes-dashboard
+spec:
+  replicas: 1
+  revisionHistoryLimit: 10
+  selector:
+    matchLabels:
+      k8s-app: kubernetes-dashboard
+  template:
+    metadata:
+      labels:
+        k8s-app: kubernetes-dashboard
+    spec:
+      securityContext:
+        seccompProfile:
+          type: RuntimeDefault
+      containers:
+        - name: kubernetes-dashboard
+          image: kubernetesui/dashboard:v2.6.1
+          imagePullPolicy: Always
+          ports:
+            - containerPort: 8443
+              protocol: TCP
+          args:
+            - --auto-generate-certificates
+            - --namespace=kubernetes-dashboard
+            # Uncomment the following line to manually specify Kubernetes API server Host
+            # If not specified, Dashboard will attempt to auto discover the API server and connect
+            # to it. Uncomment only if the default does not work.
+            # - --apiserver-host=http://my-address:port
+          volumeMounts:
+            - name: kubernetes-dashboard-certs
+              mountPath: /certs
+              # Create on-disk volume to store exec logs
+            - mountPath: /tmp
+              name: tmp-volume
+          livenessProbe:
+            httpGet:
+              scheme: HTTPS
+              path: /
+              port: 8443
+            initialDelaySeconds: 30
+            timeoutSeconds: 30
+          securityContext:
+            allowPrivilegeEscalation: false
+            readOnlyRootFilesystem: true
+            runAsUser: 1001
+            runAsGroup: 2001
+      volumes:
+        - name: kubernetes-dashboard-certs
+          secret:
+            secretName: kubernetes-dashboard-certs
+        - name: tmp-volume
+          emptyDir: {}
+      serviceAccountName: kubernetes-dashboard
+      nodeSelector:
+        "kubernetes.io/os": linux
+      # Comment the following tolerations if Dashboard must not be deployed on master
+      tolerations:
+        - key: node-role.kubernetes.io/master
+          effect: NoSchedule
+
+---
+
+kind: Service
+apiVersion: v1
+metadata:
+  labels:
+    k8s-app: dashboard-metrics-scraper
+  name: dashboard-metrics-scraper
+  namespace: kubernetes-dashboard
+spec:
+  ports:
+    - port: 8000
+      targetPort: 8000
+  selector:
+    k8s-app: dashboard-metrics-scraper
+
+---
+
+kind: Deployment
+apiVersion: apps/v1
+metadata:
+  labels:
+    k8s-app: dashboard-metrics-scraper
+  name: dashboard-metrics-scraper
+  namespace: kubernetes-dashboard
+spec:
+  replicas: 1
+  revisionHistoryLimit: 10
+  selector:
+    matchLabels:
+      k8s-app: dashboard-metrics-scraper
+  template:
+    metadata:
+      labels:
+        k8s-app: dashboard-metrics-scraper
+    spec:
+      securityContext:
+        seccompProfile:
+          type: RuntimeDefault
+      containers:
+        - name: dashboard-metrics-scraper
+          image: kubernetesui/metrics-scraper:v1.0.8
+          ports:
+            - containerPort: 8000
+              protocol: TCP
+          livenessProbe:
+            httpGet:
+              scheme: HTTP
+              path: /
+              port: 8000
+            initialDelaySeconds: 30
+            timeoutSeconds: 30
+          volumeMounts:
+            - mountPath: /tmp
+              name: tmp-volume
+          securityContext:
+            allowPrivilegeEscalation: false
+            readOnlyRootFilesystem: true
+            runAsUser: 1001
+            runAsGroup: 2001
+      serviceAccountName: kubernetes-dashboard
+      nodeSelector:
+        "kubernetes.io/os": linux
+      # Comment the following tolerations if Dashboard must not be deployed on master
+      tolerations:
+        - key: node-role.kubernetes.io/master
+          effect: NoSchedule
+      volumes:
+        - name: tmp-volume
+          emptyDir: {}

+```

+

+#### 3. Create the certificates

+

+```shell
+[root@k8s-master ~]# mkdir dashboard-certs
+[root@k8s-master ~]# cd dashboard-certs/
+# Create the namespace
+[root@k8s-master ~]# kubectl create namespace kubernetes-dashboard
+# Create the private key file
+[root@k8s-master ~]# openssl genrsa -out dashboard.key 2048
+# Create the certificate signing request
+[root@k8s-master ~]# openssl req -new -out dashboard.csr -key dashboard.key -subj '/CN=dashboard-cert'
+# Self-sign the certificate (-days belongs here: it sets the validity of the issued certificate)
+[root@k8s-master ~]# openssl x509 -req -days 36000 -in dashboard.csr -signkey dashboard.key -out dashboard.crt
+# Create the kubernetes-dashboard-certs Secret
+[root@k8s-master ~]# kubectl create secret generic kubernetes-dashboard-certs --from-file=dashboard.key --from-file=dashboard.crt -n kubernetes-dashboard
+```

+

+#### 4. Create an administrator account

+

+```shell
+# Create the account
+[root@k8s-master ~]# vim dashboard-admin.yaml
+apiVersion: v1
+kind: ServiceAccount
+metadata:
+  labels:
+    k8s-app: kubernetes-dashboard
+  name: dashboard-admin
+  namespace: kubernetes-dashboard
+# After saving and exiting, run:
+[root@k8s-master ~]# kubectl create -f dashboard-admin.yaml
+# Grant permissions to the account
+[root@k8s-master ~]# vim dashboard-admin-bind-cluster-role.yaml
+apiVersion: rbac.authorization.k8s.io/v1
+kind: ClusterRoleBinding
+metadata:
+  name: dashboard-admin-bind-cluster-role
+  labels:
+    k8s-app: kubernetes-dashboard
+roleRef:
+  apiGroup: rbac.authorization.k8s.io
+  kind: ClusterRole
+  name: cluster-admin
+subjects:
+- kind: ServiceAccount
+  name: dashboard-admin
+  namespace: kubernetes-dashboard
+# After saving and exiting, run:
+[root@k8s-master ~]# kubectl create -f dashboard-admin-bind-cluster-role.yaml
+```

+

+#### 5. Install the Dashboard

+

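+```shell
+# The Namespace and the kubernetes-dashboard-certs Secret were already created in step 3,
+# so `kubectl create` reports AlreadyExists for those two objects; the rest are still created.
+[root@master dashboard-certs]# kubectl create -f dashboard.yaml
+```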

+

+#### 6. Obtain a token

+

+```shell

+[root@master dashboard-certs]# kubectl -n kubernetes-dashboard create token dashboard-admin

+eyJhbGciOiJSUzI1NiIsImtpZCI6InlBck13aTFMR2daR3htTmxSdG5XbGJjOVFLWmdMZlgzRU10TmJWRFNEMk0ifQ.eyJhdWQiOlsiaHR0cHM6Ly9rdWJlcm5ldGVzLmRlZmF1bHQuc3ZjLmNsdXN0ZXIubG9jYWwiXSwiZXhwIjoxNjYyMjE0MTA3LCJpYXQiOjE2NjIyMTA1MDcsImlzcyI6Imh0dHBzOi8va3ViZXJuZXRlcy5kZWZhdWx0LnN2Yy5jbHVzdGVyLmxvY2FsIiwia3ViZXJuZXRlcy5pbyI6eyJuYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsInNlcnZpY2VhY2NvdW50Ijp7Im5hbWUiOiJkYXNoYm9hcmQtYWRtaW4iLCJ1aWQiOiIwOTRhYWI2NC05NTkyLTRjYTctOWI3MS0yNDEwMmI5ODA1YjcifX0sIm5iZiI6MTY2MjIxMDUwNywic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmVybmV0ZXMtZGFzaGJvYXJkOmRhc2hib2FyZC1hZG1pbiJ9.MQfc83l08PsAqmBHRzwgE_TsZGct0Sul1lM7Ks0ssf29DtXt22u9hHisvaLmQ64sNsvb_D7r47kDDTxZPbIJP_A2mBuHFqy_dnryUmqlTj7KFJm4PdObwiMTlnBch-v7HqxJKLuA6XXLxtpNrbLWqqG47Bc2kvvcF4BzSkiDhe-s5L0PS-WY753QjV0C9v63G8KJDxkQEGVC4PjqfXSclLi_jvIe4n3UqhUNHPl85JWgBhJHTTAei3Ztp7IMweztR_P30p6BiXEF0Kmcv8Nb7Xsk2dx5avYyiTRZTpq4pBkvAMKlCbXyKufh78mil_oNdaA8Q_AeFWFwgDx9UrGoFA

+```
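
The token printed above is a JWT, so its middle segment can be decoded to see which account it belongs to and when it expires (in the token shown, `exp` is one hour after `iat`). The sketch below is one way to do that with standard tools. It assumes GNU `base64`, `jwt_payload` is a hypothetical helper name, and the sample token is an unsigned stand-in built on the spot with the same `sub`, `iat` and `exp` claims, not a usable credential.

```shell
#!/bin/sh
# Print the decoded payload (claims) of a JWT without verifying its signature.
jwt_payload() {
    # Take the second dot-separated segment and map base64url characters back to base64.
    payload=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
    # base64url drops the '=' padding; restore it so that base64 -d accepts the input.
    case $(( ${#payload} % 4 )) in
        2) payload="${payload}==" ;;
        3) payload="${payload}=" ;;
    esac
    printf '%s' "$payload" | base64 -d
}

# Build an unsigned sample token (header "{}", dummy signature) for the demonstration.
sample_claims='{"sub":"system:serviceaccount:kubernetes-dashboard:dashboard-admin","iat":1662210507,"exp":1662214107}'
encoded=$(printf '%s' "$sample_claims" | base64 | tr -d '=\n' | tr '/+' '_-')
sample_token="e30.${encoded}.c2ln"

jwt_payload "$sample_token"
echo
```

With a real token, `jwt_payload "$(kubectl -n kubernetes-dashboard create token dashboard-admin)"` would show the actual claims; a token whose `exp` has passed has to be reissued.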

+

+#### 7. Create a kubeconfig

+

+```shell

+# Obtain the certificate-authority-data:

+[root@master ~]# CURRENT_CONTEXT=$(kubectl config current-context)

+[root@master ~]# CURRENT_CLUSTER=$(kubectl config view --raw -o=go-template='{{range .contexts}}{{if eq .name "'''${CURRENT_CONTEXT}'''"}}{{ index .context "cluster" }}{{end}}{{end}}')

+[root@master ~]# kubectl config view --raw -o=go-template='{{range .clusters}}{{if eq .name "'''${CURRENT_CLUSTER}'''"}}"{{with index .cluster "certificate-authority-data" }}{{.}}{{end}}"{{ end }}{{ end }}'

+"LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUMvakNDQWVhZ0F3SUJBZ0lCQURBTkJna3Foa2lHOXcwQkFRc0ZBREFWTVJNd0VRWURWUVFERXdwcmRXSmwKY201bGRHVnpNQjRYRFRJeU1Ea3dNekEzTVRBeE0xb1hEVE15TURnek1UQTNNVEF4TTFvd0ZURVRNQkVHQTFVRQpBeE1LYTNWaVpYSnVaWFJsY3pDQ0FTSXdEUVlKS29aSWh2Y05BUUVCQlFBRGdnRVBBRENDQVFvQ2dnRUJBTVNZCnRxdjVwdlVaQVN3VHl0ODkrWGphM1ZuZFZnaWVCbFc1bEZVc2dzSklxa0tSNlV2cjVYcXEvWjNOaUVpUlBqT28KWkh4a1V5SWpqdUFTUXZuYzhrTXhvNjNQY3d2UUNEYzd3V1pQeVBxMDVobFZhUlhYK0hHNjlaRXozYkQrUmlObgpyTU5uSVZqeEI0ck56SWs0cGFUNjBZMU5hdWx0V01NbEFyMFM3ZC9YQ3ZMeVhBK0NCNVFmZ2xSQTFJZnJ3ZjNJCno3YS9iQ0M4Qk9Fak94QmllRCtra3JYWGJtdXlMUHpTZkdKUGNUajI1eGdjK2RvNDJZKzZ4UUVCK0ZTSnN6VWIKVzhyMkx5TkI2YjNaZlBZcjNIMXQ4RkxkeUxtTU9nR1M2RkpPMmpQWVVWR0RObURLUHlPZWJMVit4UXlvMW4rMQpYK0F5NzJ1b2JlbklESE54czhzQ0F3RUFBYU5aTUZjd0RnWURWUjBQQVFIL0JBUURBZ0trTUE4R0ExVWRFd0VCCi93UUZNQU1CQWY4d0hRWURWUjBPQkJZRUZMVXJSUnZXN2VrRlJhajRFRDFuWmVONXJzNVRNQlVHQTFVZEVRUU8KTUF5Q0NtdDFZbVZ5Ym1WMFpYTXdEUVlKS29aSWh2Y05BUUVMQlFBRGdnRUJBQ2lucC9OakRCOGhLbFhuMzJCTQorV0hLcTRTOHNCTFBFeFhJSzloUXdWNmVodWgyOEQzSEltOFlFUCtJazEybDUwNi90QlNpYllOYjV1dHYyVnBmCmltUEh2aFAvUzZmTFE4MXVIL2JaQytaMlJ3b3VvLzU4TkJoZVRhR2ozK2VXTzNnMDllaEhaaHVFajE4WWVtaDYKU0xhUU9SZWE2dEpHVjNlVURWUk44Tnc1aXp2T3AxZ2poVHdsNzJTL3JycmxoREo2dGM4VDlPaUFhOWNkeVlPWApLNVZNdlEwRWw3aDlLT3lmN3FsSWgxL0dhSWdqVUl4Z3FNQ1lIallKc0Jvd2g2eDB5d0tSYllJQkl4d1M0NDNECnRmYU5wOTBpUFVGS0Q3c2IvTWxGeDZpK3l3UjVnQUd3NWJWSEdIZTMrY0szNzlRd1R2NS8zdWlYQTlBUnhiVloKS0hNPQotLS0tLUVORCBDRVJUSUZJQ0FURS0tLS0tCg=="

+

+[root@master ~]# cat config

+apiVersion: v1

+clusters:

+- cluster:

+ certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUM1ekNDQWMrZ0F3SUJBZ0lCQURBTkJna3Foa2lHOXcwQkFRc0ZBREFWTVJNd0VRWURWUVFERXdwcmRXSmwKY201bGRHVnpNQjRYRFRJeE1EVXhNakV3TXpReU5Gb1hEVE14TURVeE1ERXdNelF5TkZvd0ZURVRNQkVHQTFVRQpBeE1LYTNWaVpYSnVaWFJsY3pDQ0FTSXdEUVlKS29aSWh2Y05BUUVCQlFBRGdnRVBBRENDQVFvQ2dnRUJBTFd5CmFZLy85Nlc5R2hxWWVBVER5cXhQV2tmVE1mSUxNMFkydy9RSm9SRDJWZmw1cjFNR25mRWNteG81bXc4Z1NXMmEKdVNmeml6dU41eDRMblBBYlVvbHdubkxMeWx3cENmTkRKMFRUVFFyOThuTmphWWQ0d2RmK09uZmtZQ1VaVG1NTwpYWDZBMEZJblFHTEpWQUdOb0xUUnVIR3F6dU0yNUd1Rkp5aXBoNlhRN0tHcmJFVFo0RXQyVWg3azV2UGxPVDQ3CkNEQjlDVkMra1c5MkdIRmNrTkViUU9kTUpPTkZXalh1K1lsSjlZdzNybzhJYU05QVR5SDFwNmNzaXNoejhybVQKUGZEUkl4cXpsNTVzenJNUHV5Y0JqZkVEZXFhcjQ2OXJyMFFGcWJ5NTdaaEtBcGMybTA0eTZ6ZllUQlB4cDhndwpXZ01ONktkcjU4bEFpOERwN3kwQ0F3RUFBYU5DTUVBd0RnWURWUjBQQVFIL0JBUURBZ0trTUE4R0ExVWRFd0VCCi93UUZNQU1CQWY4d0hRWURWUjBPQkJZRUZERTBCTVRreE5BYlpmMGZ6S2JwRm5ZUU94aXpNQTBHQ1NxR1NJYjMKRFFFQkN3VUFBNElCQVFCdE1yU281K2JlcEF2UGpwOUJ4TDlncnFtTnpOVEczRWFHZnZKUndoUE5qVXZ1bDZFZQpNenlIM3o3SERzYW5kSHJMU2xYMGZXZ2Y3TFRBSVRLV3duTDI3NjVzaTJ4L0prb2pCV0VySytGTEs1dGZXbmdmCnZ4cE13eE83RVNYd0FwVDdKWk9iVVA0eStPc3k2VzhQQXRjeHFGUHdyaVNhM29KYnZwZEFvcHloZXdoNUxNcUwKWkpobU4wK1RhTnlOaVJXaEkwcnZOSGYrNEdtcjhMd2lzMXZPdGZ4b2FtcGhPWE8wS0NheEJ0MWoxcDQxK2pNTwo0UUhlRWtFTkJndERzMUtuMTRhbFR6NWI1cFg0amwrU2tOOFk0ZlppUk9SRXJ4cWVmVkJEZ1Z0aWtQbEp5VFkyCmRQMkJOSE82R1FjalVvZmZBTmwwK1ZkblJTWGEvNVRsZU1PUwotLS0tLUVORCBDRVJUSUZJQ0FURS0tLS0tCg==

+ server: https://10.0.2.150:6443

+ name: kubernetes

+contexts:

+- context:

+ cluster: kubernetes

+ user: dashboard-admin

+ name: dashboard-admin@kubernetes

+current-context: dashboard-admin@kubernetes

+kind: Config

+preferences: {}

+users:

+- name: dashboard-admin

+ user:

+ token: eyJhbGciOiJSUzI1NiIsImtpZCI6InlBck13aTFMR2daR3htTmxSdG5XbGJjOVFLWmdMZlgzRU10TmJWRFNEMk0ifQ.eyJhdWQiOlsiaHR0cHM6Ly9rdWJlcm5ldGVzLmRlZmF1bHQuc3ZjLmNsdXN0ZXIubG9jYWwiXSwiZXhwIjoxNjYyMjE0MjcwLCJpYXQiOjE2NjIyMTA2NzAsImlzcyI6Imh0dHBzOi8va3ViZXJuZXRlcy5kZWZhdWx0LnN2Yy5jbHVzdGVyLmxvY2FsIiwia3ViZXJuZXRlcy5pbyI6eyJuYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsInNlcnZpY2VhY2NvdW50Ijp7Im5hbWUiOiJkYXNoYm9hcmQtYWRtaW4iLCJ1aWQiOiIwOTRhYWI2NC05NTkyLTRjYTctOWI3MS0yNDEwMmI5ODA1YjcifX0sIm5iZiI6MTY2MjIxMDY3MCwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmVybmV0ZXMtZGFzaGJvYXJkOmRhc2hib2FyZC1hZG1pbiJ9.bHK9jgpCAQIAxwurh05zzndo22hWEzoDnfFRS3VDWAfoD0YOsTF6RbHFSshn0Vm-Xv1sEIgmVkjgftP2Pq_saMs-WdgHfTLz2CjxWpkYV4WQcMs4WJq9Lx5SQeNxw9mEh8c085nnx368GWkENHSsldKP-O6YliWQAP8qpOiUWrJqhteVQi0GD7EYmOPlnKFZF2YKaROYFvn9P8JiCL8rRTZ5GUYIty9LRLkh3daFXj67krk4v3pNLqdHcKKwkv8vFN4hl6RbgA3nY

+```
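
Rather than editing the file by hand, the same structure can be rendered from its three variable parts. This is only a sketch: `render_kubeconfig` is a hypothetical helper, the cluster, context and user names are copied from the file above, and the CA data and token passed in the demonstration are placeholders that would need to be replaced with the real values obtained in the earlier steps.

```shell
#!/bin/sh
# Render a token-based kubeconfig for the dashboard-admin account.
# Arguments: API server URL, base64-encoded CA certificate, bearer token.
render_kubeconfig() {
    server=$1
    ca_data=$2
    token=$3
    cat <<EOF
apiVersion: v1
kind: Config
clusters:
- cluster:
    certificate-authority-data: ${ca_data}
    server: ${server}
  name: kubernetes
contexts:
- context:
    cluster: kubernetes
    user: dashboard-admin
  name: dashboard-admin@kubernetes
current-context: dashboard-admin@kubernetes
preferences: {}
users:
- name: dashboard-admin
  user:
    token: ${token}
EOF
}

# Placeholder values only; substitute the real CA data and token.
render_kubeconfig "https://10.0.2.150:6443" "<base64-CA-data>" "<token>"
```

Redirecting the output to a file gives something that can be uploaded on the Dashboard login page or passed to `kubectl --kubeconfig`.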

+