Building a Highly Available Kubernetes Cluster from Binaries

Author: 行癫 (piracy will be prosecuted)

------

## I: Environment Overview

#### 1. Host Planning

| IP address | Hostname | Spec | Role | Software |
| :---------: | :---------------------------: | :------: | :------: | :----------------------------------------------------------: |
| 10.9.12.60 | xingdiancloud-native-master-a | 2C4G | master | kube-apiserver, kube-controller-manager, kube-scheduler, etcd, kubectl, haproxy, keepalived |
| 10.9.12.64 | xingdiancloud-native-master-b | 2C4G | master | kube-apiserver, kube-controller-manager, kube-scheduler, etcd, kubectl, haproxy, keepalived |
| 10.9.12.66 | xingdiancloud-native-node-a | 2C4G | worker | kubelet, kube-proxy, docker |
| 10.9.12.65 | xingdiancloud-native-node-b | 2C4G | worker | kubelet, kube-proxy, docker |
| 10.9.12.67 | xingdiancloud-native-node-c | 2C4G | worker | kubelet, kube-proxy, docker |
| 10.9.12.100 | / | / | VIP | |

#### 2. Software Versions

| Software | Version | Notes |
| :--------: | :-----: | :-------: |
| CentOS | 7.9 | |
| kubernetes | v1.28.0 | |
| etcd | v3.5.11 | |
| calico | v3.26.4 | |
| coredns | v1.10.1 | |
| docker | 24.0.7 | |
| haproxy | 1.5.18 | Default from the YUM repository |
| keepalived | 1.3.5 | Default from the YUM repository |

#### 3. Network Allocation

| Network | CIDR | Notes |
| :---------: | :-----------: | :--: |
| Node network | 10.9.12.0/24 | |
| Service network | 10.96.0.0/16 | |
| Pod network | 10.244.0.0/16 | |

## II: Cluster Preparation

#### 1. Set the Hostname

```shell

[root@xingdiancloud-native-master-a ~]# nmcli g hostname xingdiancloud-native-master-a

```

Note:

Run this on every node, using the hostname planned for that node

#### 2. Name Resolution

```shell

[root@xingdiancloud-native-master-a ~]# cat >> /etc/hosts << EOF

10.9.12.60 xingdiancloud-native-master-a

10.9.12.64 xingdiancloud-native-master-b

10.9.12.66 xingdiancloud-native-node-a

10.9.12.65 xingdiancloud-native-node-b

10.9.12.67 xingdiancloud-native-node-c

EOF

```

Note:

Repeat this step on every node

#### 3. Firewall and SELinux

Disable both, immediately and permanently

The commands are omitted here

Note:

Repeat this step on every node
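
The commands for this step are not listed. A minimal sketch of what they usually look like on CentOS 7, assuming firewalld is the active firewall; the helper name `selinux_disable_in_config` is made up for illustration and takes the file path as a parameter so it can be tried on a copy first:

```shell
#!/bin/bash
# Hypothetical helper: rewrite the SELINUX= line of a config file so that
# SELinux stays disabled after a reboot. SELINUXTYPE= is left untouched.
selinux_disable_in_config() {
    local config_file="$1"
    sed -ri 's/^SELINUX=.*/SELINUX=disabled/' "$config_file"
}

# Usage on a node (run as root):
#   systemctl disable --now firewalld              # stop the firewall now and at boot
#   setenforce 0                                   # permissive until the next reboot
#   selinux_disable_in_config /etc/selinux/config  # permanent after reboot
```

`setenforce 0` only lasts until the next reboot, which is why the config file is edited as well.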

#### 4. Disable Swap

```shell

[root@xingdiancloud-native-master-a ~]# swapoff -a

[root@xingdiancloud-native-master-a ~]# sed -ri 's/.*swap.*/#&/' /etc/fstab

[root@xingdiancloud-native-master-a ~]# echo "vm.swappiness=0" >> /etc/sysctl.conf

[root@xingdiancloud-native-master-a ~]# sysctl -p

```

Note:

Repeat this step on every node

#### 5. Time Synchronization

```shell

[root@xingdiancloud-native-master-a ~]# yum -y install ntpdate

[root@xingdiancloud-native-master-a ~]# ntpdate -b ntp.aliyun.com

# Schedule a cron job for time synchronization: add the entry below in the editor (it runs every 5 hours)

[root@xingdiancloud-native-master-a ~]# crontab -e

0 */5 * * * /usr/sbin/ntpdate -b ntp.aliyun.com

```

Note:

Repeat this step on every node

#### 6. Install the IPVS Tools and Load Kernel Modules

```shell

[root@xingdiancloud-native-master-a ~]# yum -y install ipvsadm ipset sysstat conntrack libseccomp

# Configure how the ipvsadm modules are loaded

# List the modules that need to be loaded

[root@xingdiancloud-native-master-a ~]# cat > /etc/sysconfig/modules/ipvs.modules << EOF

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack

EOF

# Make the script executable, run it, and check that the modules are loaded

[root@xingdiancloud-native-master-a ~]# chmod 755 /etc/sysconfig/modules/ipvs.modules && bash /etc/sysconfig/modules/ipvs.modules && lsmod | grep -e ip_vs -e nf_conntrack

```

Note:

Repeat this step on every node

#### 7. Linux Kernel Tuning

Add the bridge-filter and IP-forwarding configuration files

```shell

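[root@xingdiancloud-native-master-a ~]# cat > /etc/sysctl.d/k8s.conf << EOF

# Assumed content: the original lines of this file were lost; these are the usual bridge-filter and forwarding settings

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.ipv4.ip_forward = 1

EOF

[root@xingdiancloud-native-master-a ~]# cat > /etc/modules-load.d/containerd.conf << EOF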

overlay

br_netfilter

EOF

[root@xingdiancloud-native-master-a ~]# systemctl enable --now systemd-modules-load.service

```

Check that the module is loaded

```shell

[root@xingdiancloud-native-master-a ~]# lsmod | grep br_netfilter

br_netfilter 28672 0

```

#### 8. Configure Passwordless SSH

Run this on xingdiancloud-native-master-a only, then copy the public key to the other nodes

```shell

[root@xingdiancloud-native-master-a ~]# ssh-keygen

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa):

Created directory '/root/.ssh'.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:40/tHc966yq63YQ8YK84udBoZMqkCeZB5XTj8QaSOZo root@k8s-master1

The key's randomart image is:

+---[RSA 2048]----+

| +o= |

| +++ = |

| .o... o |

|.E . |

|.o . o So |

|+ * + o...+.. |

| + o + .o .=... |

| . .o.ooo+. +.|

| oo++.oo==+|

+----[SHA256]-----+

[root@xingdiancloud-native-master-a ~]# ssh-copy-id root@xingdiancloud-native-master-b

[root@xingdiancloud-native-master-a ~]# ssh-copy-id root@xingdiancloud-native-node-b

[root@xingdiancloud-native-master-a ~]# ssh-copy-id root@xingdiancloud-native-node-a

[root@xingdiancloud-native-master-a ~]# ssh-copy-id root@xingdiancloud-native-node-c

```

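## III: Deploy the Highly Available Load Balancer

#### 1. Install haproxy and keepalived

Run this on the nodes that host the HA layer; here HA is deployed on xingdiancloud-native-master-a and xingdiancloud-native-master-b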

```

[root@xingdiancloud-native-master-a ~]# yum -y install haproxy keepalived

```

#### 2. HAProxy Configuration

Run this on the HA nodes; the HAProxy configuration is identical on all of them

```shell

[root@xingdiancloud-native-master-a ~]# cat >/etc/haproxy/haproxy.cfg<<"EOF"

global

maxconn 2000

ulimit-n 16384

log 127.0.0.1 local0 err

stats timeout 30s

defaults

log global

mode http

option httplog

timeout connect 5000

timeout client 50000

timeout server 50000

timeout http-request 15s

timeout http-keep-alive 15s

frontend monitor-in

bind *:33305

mode http

option httplog

monitor-uri /monitor

frontend xingdiancloud-master

bind 0.0.0.0:6443

bind 127.0.0.1:6443

mode tcp

option tcplog

tcp-request inspect-delay 5s

default_backend xingdiancloud-master

backend xingdiancloud-master

mode tcp

option tcplog

option tcp-check

balance roundrobin

default-server inter 10s downinter 5s rise 2 fall 2 slowstart 60s maxconn 250 maxqueue 256 weight 100

server xingdiancloud-master-a 10.9.12.60:6442 check

server xingdiancloud-master-b 10.9.12.64:6442 check

EOF

```

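#### 3. Keepalived Configuration

The Master and Backup configurations differ (`state`, `mcast_src_ip`, and `priority`), so take care when copying. Set `interface` to the NIC name that actually exists on each host; the sample `ip a s` output in step 5 shows `ens33`

Master: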

```shell

[root@xingdiancloud-native-master-a ~]# cat >/etc/keepalived/keepalived.conf<<"EOF"

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

script_user root

enable_script_security

}

vrrp_script chk_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 5

weight -5

fall 2

rise 1

}

vrrp_instance VI_1 {

state MASTER

interface ens3

mcast_src_ip 10.9.12.60

virtual_router_id 51

priority 100

advert_int 2

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

virtual_ipaddress {

10.9.12.100

}

track_script {

chk_apiserver

}

}

EOF

```

Backup:

```shell

[root@xingdiancloud-native-master-b ~]# cat >/etc/keepalived/keepalived.conf<<"EOF"

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

script_user root

enable_script_security

}

vrrp_script chk_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 5

weight -5

fall 2

rise 1

}

vrrp_instance VI_1 {

state BACKUP

interface ens3

mcast_src_ip 10.9.12.64

virtual_router_id 51

priority 99

advert_int 2

authentication {

auth_type PASS

auth_pass K8SHA_KA_AUTH

}

virtual_ipaddress {

10.9.12.100

}

track_script {

chk_apiserver

}

}

EOF

```

#### 4. Health Check Script

The script must exist on both the Master and the Backup node

```shell

[root@xingdiancloud-native-master-a ~]# cat > /etc/keepalived/check_apiserver.sh <<"EOF"

#!/bin/bash

err=0

for k in $(seq 1 2)

do

check_code=$(pgrep haproxy)

if [[ $check_code == "" ]]; then

err=$(expr $err + 1)

sleep 1

continue

else

err=0

break

fi

done

if [[ $err != "0" ]]; then

echo "systemctl stop keepalived"

/usr/bin/systemctl stop keepalived

exit 1

else

exit 0

fi

EOF

[root@xingdiancloud-native-master-a ~]# chmod +x /etc/keepalived/check_apiserver.sh

```

#### 5. Start the Services and Verify

```shell

[root@xingdiancloud-native-master-a ~]# systemctl daemon-reload

[root@xingdiancloud-native-master-a ~]# systemctl enable --now haproxy

[root@xingdiancloud-native-master-a ~]# systemctl enable --now keepalived

```

Note:

Start the Master node first, then the Backup node

Verify the VIP:

```shell

[root@xingdiancloud-native-master-a ~]# ip a s

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 00:0c:29:09:7a:32 brd ff:ff:ff:ff:ff:ff

inet 10.9.12.60/24 brd 192.168.198.255 scope global noprefixroute ens33

valid_lft forever preferred_lft forever

inet 10.9.12.100/32 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::6d0d:5af:b421:6829/64 scope link noprefixroute

valid_lft forever preferred_lft forever

inet6 fe80::2dcd:beb6:b077:827d/64 scope link tentative noprefixroute dadfailed

valid_lft forever preferred_lft forever

```
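
The VIP check above can be scripted so it is easy to repeat on both HA nodes. A minimal sketch, assuming a hypothetical helper named `has_address` that reads `ip -4 addr` style output from standard input:

```shell
#!/bin/bash
# Hypothetical helper: succeed if the given IPv4 address appears as an
# "inet <address>/<prefix>" entry in the text read from stdin.
has_address() {
    local wanted="$1"
    # Fixed-string match including the trailing slash, so 10.9.12.10
    # does not match a line that carries 10.9.12.100.
    grep -Fq "inet ${wanted}/"
}

# Usage on an HA node:
#   ip -4 addr show | has_address 10.9.12.100 && echo "VIP is on this node"
```

Only the node currently holding the Master role should report the VIP.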

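Check that the HAProxy monitor page displays correctly by opening `http://10.9.12.100:33305/monitor` (the `monitor-uri` configured above) in a browser

## IV: etcd Cluster Deployment

Note:

Run the following steps on xingdiancloud-native-master-a

#### 1. Create the Working Directory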

```shell

[root@xingdiancloud-native-master-a ~]# mkdir -p /data/k8s-work

```

#### 2. Install the cfssl Tools

https://github.com/cloudflare/cfssl/releases

```shell

[root@xingdiancloud-native-master-a k8s-work]# ll

total 40232

-rw-r--r-- 1 root root 16659824 Mar 9 2022 cfssl_1.6.1_linux_amd64

-rw-r--r-- 1 root root 13502544 Mar 9 2022 cfssl-certinfo_1.6.1_linux_amd64

-rw-r--r-- 1 root root 11029744 Mar 9 2022 cfssljson_1.6.1_linux_amd64

# Make the binaries executable

[root@xingdiancloud-native-master-a k8s-work]# chmod +x cfssl*

[root@xingdiancloud-native-master-a k8s-work]# ll

total 40232

-rwxr-xr-x 1 root root 16659824 Mar 9 2022 cfssl_1.6.1_linux_amd64

-rwxr-xr-x 1 root root 13502544 Mar 9 2022 cfssl-certinfo_1.6.1_linux_amd64

-rwxr-xr-x 1 root root 11029744 Mar 9 2022 cfssljson_1.6.1_linux_amd64

# Rename the binaries and move them to /usr/local/bin

[root@xingdiancloud-native-master-a k8s-work]# mv cfssl_1.6.1_linux_amd64 /usr/local/bin/cfssl

[root@xingdiancloud-native-master-a k8s-work]# mv cfssl-certinfo_1.6.1_linux_amd64 /usr/local/bin/cfssl-certinfo

[root@xingdiancloud-native-master-a k8s-work]# mv cfssljson_1.6.1_linux_amd64 /usr/local/bin/cfssljson

# Installation is complete; check the cfssl version

[root@xingdiancloud-native-master-a k8s-work]# cfssl version

Version: 1.6.1

Runtime: go1.12.12

```

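#### 3. Create the CA Certificate

Note:

The CA acts as the certificate authority for the cluster

Run on xingdiancloud-native-master-a

##### 3.1 Write the CA certificate signing request file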

```shell

[root@xingdiancloud-native-master-a k8s-work]# cat > ca-csr.json <<"EOF"

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "kubemsb",

"OU": "CN"

}

],

"ca": {

"expiry": "87600h"

}

}

EOF

```

##### 3.2 Create the CA certificate

```shell

[root@xingdiancloud-native-master-a k8s-work]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca

2024/01/04 09:22:43 [INFO] generating a new CA key and certificate from CSR

2024/01/04 09:22:43 [INFO] generate received request

2024/01/04 09:22:43 [INFO] received CSR

2024/01/04 09:22:43 [INFO] generating key: rsa-2048

2024/01/04 09:22:43 [INFO] encoded CSR

2024/01/04 09:22:43 [INFO] signed certificate with serial number 338731219198113317417686336532940600662573621163

# Output files: ca.csr, ca-key.pem, ca.pem

[root@xingdiancloud-native-master-a k8s-work]# ll

total 16

-rw-r--r-- 1 root root 1045 Jan 4 09:22 ca.csr

-rw-r--r-- 1 root root 256 Jan 4 09:22 ca-csr.json

-rw------- 1 root root 1679 Jan 4 09:22 ca-key.pem

-rw-r--r-- 1 root root 1310 Jan 4 09:22 ca.pem

```

##### 3.3 Configure the CA signing policy

```shell

[root@xingdiancloud-native-master-a k8s-work]# cfssl print-defaults config > ca-config.json

[root@xingdiancloud-native-master-a k8s-work]# cat > ca-config.json <<"EOF"

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

],

"expiry": "87600h"

}

}

}

}

EOF

```

#### 4. Create the etcd Certificate

##### 4.1 Write the etcd certificate signing request file

Note:

10.9.12.57 to 10.9.12.59 are reserved IP addresses

```

[root@xingdiancloud-native-master-a k8s-work]# cat > etcd-csr.json <<"EOF"

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"10.9.12.64",

"10.9.12.60",

"10.9.12.59",

"10.9.12.58",

"10.9.12.57"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "kubemsb",

"OU": "CN"

}]

}

EOF

```

##### 4.2 Generate the etcd certificate

```shell

[root@xingdiancloud-native-master-a k8s-work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

2024/01/04 10:18:44 [INFO] generate received request

2024/01/04 10:18:44 [INFO] received CSR

2024/01/04 10:18:44 [INFO] generating key: rsa-2048

2024/01/04 10:18:44 [INFO] encoded CSR

2024/01/04 10:18:44 [INFO] signed certificate with serial number 615580008866301102078218902811936499168508210128

```

Note:

This generates etcd.csr, etcd-key.pem, and etcd.pem

#### 5. Deploy the etcd Cluster

##### 5.1 Download the etcd package

```

https://github.com/etcd-io/etcd/releases/download/v3.5.11/etcd-v3.5.11-linux-amd64.tar.gz

```

##### 5.2 Install etcd

```shell

# Extract the etcd release archive

[root@xingdiancloud-native-master-a k8s-work]# tar -xf etcd-v3.5.11-linux-amd64.tar.gz

[root@xingdiancloud-native-master-a k8s-work]# cd etcd-v3.5.11-linux-amd64

[root@xingdiancloud-native-master-a etcd-v3.5.11-linux-amd64]# ll

total 54896

drwxr-xr-x 3 528287 89939 40 Dec 7 18:30 Documentation

-rwxr-xr-x 1 528287 89939 23535616 Dec 7 18:30 etcd

-rwxr-xr-x 1 528287 89939 17739776 Dec 7 18:30 etcdctl

-rwxr-xr-x 1 528287 89939 14864384 Dec 7 18:30 etcdutl

-rw-r--r-- 1 528287 89939 42066 Dec 7 18:30 README-etcdctl.md

-rw-r--r-- 1 528287 89939 7359 Dec 7 18:30 README-etcdutl.md

-rw-r--r-- 1 528287 89939 9394 Dec 7 18:30 README.md

-rw-r--r-- 1 528287 89939 7896 Dec 7 18:30 READMEv2-etcdctl.md

# Copy the etcd binaries to /usr/local/bin; the service file created later runs them from there

[root@xingdiancloud-native-master-a etcd-v3.5.11-linux-amd64]# cp etcd* /usr/local/bin/

# Distribute the binaries to the other etcd node

[root@xingdiancloud-native-master-a etcd-v3.5.11-linux-amd64]# scp etcd* xingdiancloud-native-master-b:/usr/local/bin/

etcd 100% 22MB 53.1MB/s 00:00

etcdctl 100% 17MB 48.6MB/s 00:00

etcdutl 100% 14MB 60.6MB/s 00:00

```

##### 5.3 Create the configuration files

```shell

[root@xingdiancloud-native-master-a k8s-work]# mkdir /etc/etcd

```

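Configuration for xingdiancloud-native-master-a (etcd1) and xingdiancloud-native-master-b (etcd2); the two files differ only in the member name and the IP address:

```shell

# On xingdiancloud-native-master-a

[root@xingdiancloud-native-master-a k8s-work]# cat > /etc/etcd/etcd.conf <<"EOF"

#[Member]

ETCD_NAME="etcd1"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://10.9.12.60:2380"

ETCD_LISTEN_CLIENT_URLS="https://10.9.12.60:2379,http://127.0.0.1:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.9.12.60:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://10.9.12.60:2379"

ETCD_INITIAL_CLUSTER="etcd1=https://10.9.12.60:2380,etcd2=https://10.9.12.64:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

# On xingdiancloud-native-master-b (create /etc/etcd there first)

[root@xingdiancloud-native-master-b ~]# cat > /etc/etcd/etcd.conf <<"EOF"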

#[Member]

ETCD_NAME="etcd2"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://10.9.12.64:2380"

ETCD_LISTEN_CLIENT_URLS="https://10.9.12.64:2379,http://127.0.0.1:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://10.9.12.64:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://10.9.12.64:2379"

ETCD_INITIAL_CLUSTER="etcd1=https://10.9.12.60:2380,etcd2=https://10.9.12.64:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

```
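
The per-member files differ only in the member name and the IP address, so they can be rendered from one template. A minimal sketch, assuming a hypothetical helper named `render_etcd_conf` (it is not part of etcd); the values mirror the configuration in this section:

```shell
#!/bin/bash
# Hypothetical helper: print an etcd.conf for one member.
# $1 = member name (etcd1 or etcd2), $2 = that member's IP address.
render_etcd_conf() {
    local name="$1" ip="$2"
    cat <<EOF
#[Member]
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379,http://127.0.0.1:2379"

#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_CLUSTER="etcd1=https://10.9.12.60:2380,etcd2=https://10.9.12.64:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

# Print the file for the first member; on that node the output would be
# redirected to /etc/etcd/etcd.conf.
render_etcd_conf etcd1 10.9.12.60
```

Keeping `ETCD_INITIAL_CLUSTER` identical in every rendered file avoids the most common mismatch when a member is added by hand.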

##### 5.4 Create the directories and distribute the certificates

```shell

[root@xingdiancloud-native-master-a k8s-work]# mkdir -p /etc/etcd/ssl

[root@xingdiancloud-native-master-a k8s-work]# mkdir -p /var/lib/etcd/default.etcd

```

```shell

[root@xingdiancloud-native-master-a etcd]# cd /data/k8s-work

[root@xingdiancloud-native-master-a k8s-work]# ll

total 19896

-rw-r--r-- 1 root root 356 Jan 4 10:03 ca-config.json

-rw-r--r-- 1 root root 1045 Jan 4 09:22 ca.csr

-rw-r--r-- 1 root root 256 Jan 4 09:22 ca-csr.json

-rw------- 1 root root 1679 Jan 4 09:22 ca-key.pem

-rw-r--r-- 1 root root 1310 Jan 4 09:22 ca.pem

-rw-r--r-- 1 root root 1078 Jan 4 10:18 etcd.csr

-rw-r--r-- 1 root root 331 Jan 4 10:16 etcd-csr.json

-rw------- 1 root root 1679 Jan 4 10:18 etcd-key.pem

-rw-r--r-- 1 root root 1452 Jan 4 10:18 etcd.pem

drwxr-xr-x 3 528287 89939 163 Dec 7 18:30 etcd-v3.5.11-linux-amd64

-rw-r--r-- 1 root root 20334735 Dec 7 18:36 etcd-v3.5.11-linux-amd64.tar.gz

# Copy the generated etcd and CA certificates to the ssl directory

[root@xingdiancloud-native-master-a k8s-work]# cp ca*.pem /etc/etcd/ssl

[root@xingdiancloud-native-master-a k8s-work]# cp etcd*.pem /etc/etcd/ssl

# Distribute the certificates to the other etcd node (the target directory must already exist there)

[root@xingdiancloud-native-master-a k8s-work]# scp ca*.pem xingdiancloud-native-master-b:/etc/etcd/ssl

ca-key.pem 100% 1679 1.4MB/s 00:00

ca.pem 100% 1310 1.0MB/s 00:00

[root@xingdiancloud-native-master-a k8s-work]# scp etcd*.pem xingdiancloud-native-master-b:/etc/etcd/ssl

etcd-key.pem 100% 1679 1.1MB/s 00:00

etcd.pem 100% 1452 1.2MB/s 00:00

```

##### 5.5 Create the etcd systemd unit file

Note:

Do this on every etcd node

```shell

[root@xingdiancloud-native-master-a k8s-work]# cat > /etc/systemd/system/etcd.service <<"EOF"

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=-/etc/etcd/etcd.conf

WorkingDirectory=/var/lib/etcd/

ExecStart=/usr/local/bin/etcd \

--cert-file=/etc/etcd/ssl/etcd.pem \

--key-file=/etc/etcd/ssl/etcd-key.pem \

--trusted-ca-file=/etc/etcd/ssl/ca.pem \

--peer-cert-file=/etc/etcd/ssl/etcd.pem \

--peer-key-file=/etc/etcd/ssl/etcd-key.pem \

--peer-trusted-ca-file=/etc/etcd/ssl/ca.pem \

--peer-client-cert-auth \

--client-cert-auth

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

```

##### 5.6 Start the etcd cluster

Note:

Start the etcd nodes one after another

```shell

[root@xingdiancloud-native-master-a k8s-work]# systemctl daemon-reload

[root@xingdiancloud-native-master-a k8s-work]# systemctl enable --now etcd.service

[root@xingdiancloud-native-master-a k8s-work]# systemctl status etcd

● etcd.service - Etcd Server

Loaded: loaded (/etc/systemd/system/etcd.service; enabled; vendor preset: disabled)

Active: active (running) since Thu 2024-01-04 11:21:03 CST; 1min 15s ago

Main PID: 4515 (etcd)

CGroup: /system.slice/etcd.service

└─4515 /usr/local/bin/etcd --cert-file=/etc/etcd/ssl/etcd.pem --key-file=/etc/etcd/ssl/etcd-key.pem --trusted-ca-file=/etc/etcd/ssl/ca.pem --peer-cert...

Jan 04 11:21:03 xingdiancloud-native-master-a etcd[4515]: {"level":"info","ts":"2024-01-04T11:21:03.193129+0800","caller":"api/capability.go:75","msg":"enabled capabilit...on":"3.0"}

Jan 04 11:21:03 xingdiancloud-native-master-a etcd[4515]: {"level":"info","ts":"2024-01-04T11:21:03.19358+0800","caller":"etcdserver/server.go:2066","msg":"published local member ...

Jan 04 11:21:03 xingdiancloud-native-master-a etcd[4515]: {"level":"info","ts":"2024-01-04T11:21:03.1937+0800","caller":"embed/serve.go:103","msg":"ready to serve client requests"}

Jan 04 11:21:03 xingdiancloud-native-master-a etcd[4515]: {"level":"info","ts":"2024-01-04T11:21:03.194023+0800","caller":"embed/serve.go:103","msg":"ready to serve client requests"}

Jan 04 11:21:03 xingdiancloud-native-master-a etcd[4515]: {"level":"info","ts":"2024-01-04T11:21:03.194473+0800","caller":"embed/serve.go:187","msg":"serving client traf...0.1:2379"}

Jan 04 11:21:03 xingdiancloud-native-master-a systemd[1]: Started Etcd Server.

Jan 04 11:21:03 xingdiancloud-native-master-a etcd[4515]: {"level":"info","ts":"2024-01-04T11:21:03.195005+0800","caller":"etcdmain/main.go:44","msg":"notifying init daemon"}

Jan 04 11:21:03 xingdiancloud-native-master-a etcd[4515]: {"level":"info","ts":"2024-01-04T11:21:03.195052+0800","caller":"etcdmain/main.go:50","msg":"successfully notif...t daemon"}

Jan 04 11:21:03 xingdiancloud-native-master-a etcd[4515]: {"level":"info","ts":"2024-01-04T11:21:03.196251+0800","caller":"embed/serve.go:250","msg":"serving client traf...146:2379"}

Jan 04 11:21:04 xingdiancloud-native-master-a etcd[4515]: {"level":"info","ts":"2024-01-04T11:21:04.065509+0800","caller":"membership/cluster.go:576","msg":"updated clus...to":"3.5"}

Hint: Some lines were ellipsized, use -l to show in full.

```

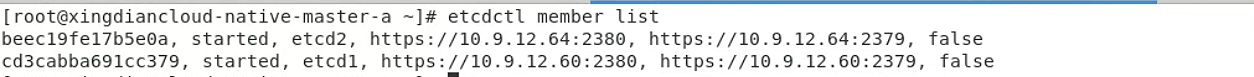

##### 5.7 Verify the cluster status

```

# The member with IS LEADER set to true is the leader

[root@xingdiancloud-native-master-a etcd]# ETCDCTL_API=3 /usr/local/bin/etcdctl --write-out=table --cacert=/etc/etcd/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem --endpoints=https://10.9.12.60:2379,https://10.9.12.64:2379 endpoint status

+------------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |

+------------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| https://10.9.12.60:2379 | f79986bfdb812e09 | 3.5.11 | 20 kB | true | false | 2 | 9 | 9 | |

| https://10.9.12.64:2379 | 3ed6f5bbee8d7853 | 3.5.11 | 20 kB | false | false | 2 | 9 | 9 | |

+------------------------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

```
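
As a side note on sizing: etcd needs a majority of members to be up, so the fault tolerance of a cluster follows directly from its member count. A small sketch of that arithmetic, with hypothetical function names chosen for illustration:

```shell
#!/bin/bash
# Quorum for a cluster of N members is floor(N/2) + 1.
etcd_quorum() { echo $(( $1 / 2 + 1 )); }

# The cluster keeps working while at most N - quorum members are down.
etcd_fault_tolerance() { echo $(( $1 - ($1 / 2 + 1) )); }

for members in 2 3 5; do
    echo "members=${members} quorum=$(etcd_quorum "$members") tolerated_failures=$(etcd_fault_tolerance "$members")"
done
```

By this arithmetic the two-member cluster shown above tolerates no member failure, while three members tolerate one.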

## V: Kubernetes Cluster Deployment

#### 1. Download the Kubernetes Package

```shell

[root@xingdiancloud-native-master-a k8s-work]# wget https://dl.k8s.io/v1.28.0/kubernetes-server-linux-amd64.tar.gz

```

#### 2. Install the Kubernetes Binaries

```shell

[root@xingdiancloud-native-master-a k8s-work]# tar -xvf kubernetes-server-linux-amd64.tar.gz

[root@xingdiancloud-native-master-a k8s-work]# cd kubernetes/server/bin/

[root@xingdiancloud-native-master-a bin]# cp kube-apiserver kube-controller-manager kube-scheduler kubectl /usr/local/bin/

```

#### 3. Distribute the Kubernetes Binaries

```shell

[root@xingdiancloud-native-master-a bin]# scp kube-apiserver kube-controller-manager kube-scheduler kubectl xingdiancloud-native-master-b:/usr/local/bin/

```

#### 4. Create Directories on the Cluster Nodes

Note:

Create these on every Master node

```shell

[root@xingdiancloud-native-master-a bin]# mkdir -p /etc/kubernetes/

[root@xingdiancloud-native-master-a bin]# mkdir -p /etc/kubernetes/ssl

[root@xingdiancloud-native-master-a bin]# mkdir -p /var/log/kubernetes

```

#### 5. Deploy kube-apiserver

##### 5.1 Write the apiserver certificate signing request file

```shell

[root@xingdiancloud-native-master-a k8s-work]# cat > kube-apiserver-csr.json << "EOF"

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"10.9.12.60",

"10.9.12.64",

"10.9.12.66",

"10.9.12.67",

"10.9.12.65",

"10.9.12.59",

"10.9.12.58",

"10.9.12.57",

"10.9.12.100",

"10.96.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "kubemsb",

"OU": "CN"

}

]

}

EOF

```

##### 5.2 Generate the apiserver certificate and the token file

Note:

This generates kube-apiserver.csr, kube-apiserver-key.pem, and kube-apiserver.pem

```shell

[root@xingdiancloud-native-master-a k8s-work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-apiserver-csr.json | cfssljson -bare kube-apiserver

```

Generate token.csv

```shell

[root@xingdiancloud-native-master-a k8s-work]# cat > token.csv << EOF

$(head -c 16 /dev/urandom | od -An -t x | tr -d ' '),kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

```
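
Each line of a static token file has the form `token,user,uid,"group"`. A minimal sketch that builds one line the same way as the command above and checks its shape; the function names are hypothetical, and the 32-hex-character length is simply what 16 random bytes produce, not a Kubernetes requirement:

```shell
#!/bin/bash
# Generate a token from 16 random bytes, printed as 32 hex characters.
generate_bootstrap_token() {
    head -c 16 /dev/urandom | od -An -t x | tr -d ' \n'
}

# Hypothetical check: <32 hex chars>,<user>,<numeric uid>,"<group>"
is_valid_token_line() {
    local pattern='^[0-9a-f]{32},[^,]+,[0-9]+,".+"$'
    [[ "$1" =~ $pattern ]]
}

line="$(generate_bootstrap_token),kubelet-bootstrap,10001,\"system:kubelet-bootstrap\""
is_valid_token_line "$line" && echo "token line looks well-formed"
```

Running the same check against the first line of token.csv after generating it catches an empty or truncated token before the apiserver is started.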

##### 5.3 Create the apiserver configuration file

```shell

[root@xingdiancloud-native-master-a k8s-work]# cat > /etc/kubernetes/kube-apiserver.conf << "EOF"

KUBE_APISERVER_OPTS="--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \

--anonymous-auth=false \

--bind-address=10.9.12.60 \

--advertise-address=10.9.12.60 \

--secure-port=6442 \

--authorization-mode=Node,RBAC \

--runtime-config=api/all=true \

--enable-bootstrap-token-auth \

--service-cluster-ip-range=10.96.0.0/16 \

--token-auth-file=/etc/kubernetes/token.csv \

--service-node-port-range=30000-32767 \

--tls-cert-file=/etc/kubernetes/ssl/kube-apiserver.pem \

--tls-private-key-file=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--client-ca-file=/etc/kubernetes/ssl/ca.pem \

--kubelet-client-certificate=/etc/kubernetes/ssl/kube-apiserver.pem \

--kubelet-client-key=/etc/kubernetes/ssl/kube-apiserver-key.pem \

--service-account-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \

--service-account-issuer=api \

--etcd-cafile=/etc/etcd/ssl/ca.pem \

--etcd-certfile=/etc/etcd/ssl/etcd.pem \

--etcd-keyfile=/etc/etcd/ssl/etcd-key.pem \

--etcd-servers=https://10.9.12.60:2379,https://10.9.12.64:2379 \

--allow-privileged=true \

--apiserver-count=2 \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--audit-log-path=/var/log/kube-apiserver-audit.log \

--event-ttl=1h \

--v=4"

EOF

```

##### 5.4 Create the apiserver systemd unit file

```shell

[root@xingdiancloud-native-master-a k8s-work]# cat > /etc/systemd/system/kube-apiserver.service << "EOF"

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

After=etcd.service

Wants=etcd.service

[Service]

EnvironmentFile=-/etc/kubernetes/kube-apiserver.conf

ExecStart=/usr/local/bin/kube-apiserver $KUBE_APISERVER_OPTS

Restart=on-failure

RestartSec=5

Type=notify

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

```

##### 5.5 Copy the files to the other Master node

```shell

[root@xingdiancloud-native-master-a k8s-work]# cp ca*.pem /etc/kubernetes/ssl/

[root@xingdiancloud-native-master-a k8s-work]# cp kube-apiserver*.pem /etc/kubernetes/ssl/

[root@xingdiancloud-native-master-a k8s-work]# cp token.csv /etc/kubernetes/

[root@xingdiancloud-native-master-a k8s-work]# scp /etc/kubernetes/ssl/ca*.pem xingdiancloud-native-master-b:/etc/kubernetes/ssl

[root@xingdiancloud-native-master-a k8s-work]# scp /etc/kubernetes/ssl/kube-apiserver*.pem xingdiancloud-native-master-b:/etc/kubernetes/ssl

[root@xingdiancloud-native-master-a k8s-work]# scp /etc/kubernetes/token.csv xingdiancloud-native-master-b:/etc/kubernetes

```

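Copy the configuration file, then change `--bind-address` and `--advertise-address` in the copy on xingdiancloud-native-master-b to that host's IP address (10.9.12.64)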

```shell

[root@xingdiancloud-native-master-a k8s-work]# scp /etc/kubernetes/kube-apiserver.conf xingdiancloud-native-master-b:/etc/kubernetes/kube-apiserver.conf

```
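
The address change on the second Master can be done with `sed` instead of editing by hand. A minimal sketch, assuming a hypothetical helper named `retarget_apiserver_conf`; it rewrites only the two address flags and leaves the etcd endpoints and every other flag alone:

```shell
#!/bin/bash
# Hypothetical helper: point --bind-address and --advertise-address in a
# kube-apiserver.conf at a different node IP.
# $1 = path to the configuration file, $2 = IP address of the target node.
retarget_apiserver_conf() {
    local conf_file="$1" node_ip="$2"
    sed -ri \
        -e "s/--bind-address=[0-9.]+/--bind-address=${node_ip}/" \
        -e "s/--advertise-address=[0-9.]+/--advertise-address=${node_ip}/" \
        "$conf_file"
}

# Usage on xingdiancloud-native-master-b:
#   retarget_apiserver_conf /etc/kubernetes/kube-apiserver.conf 10.9.12.64
```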

Copy the systemd unit file

```shell

[root@xingdiancloud-native-master-a k8s-work]# scp /etc/systemd/system/kube-apiserver.service xingdiancloud-native-master-b:/etc/systemd/system/kube-apiserver.service

```

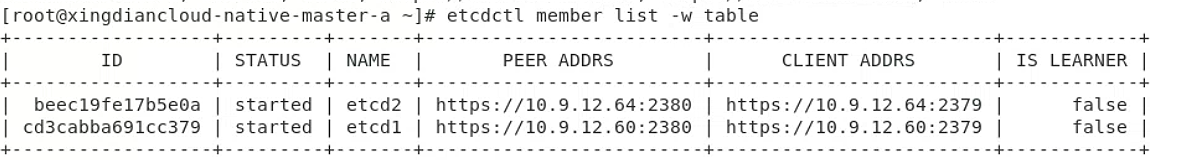

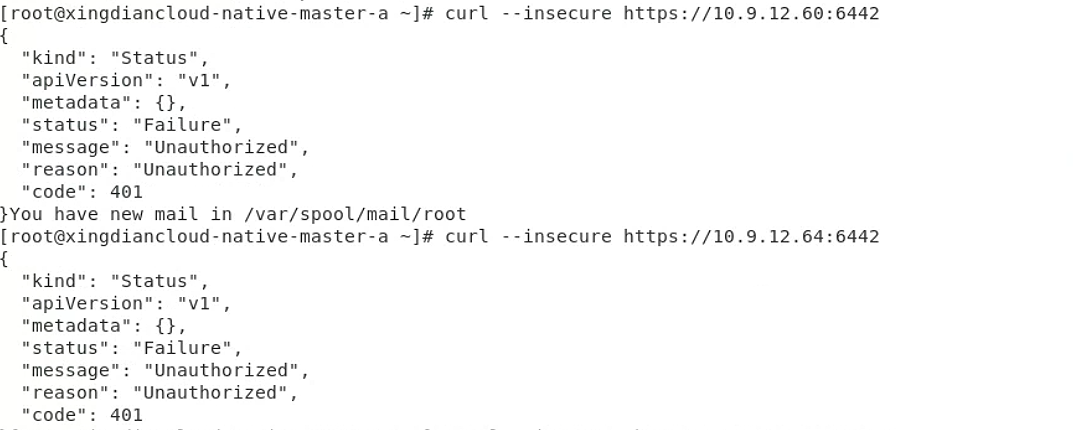

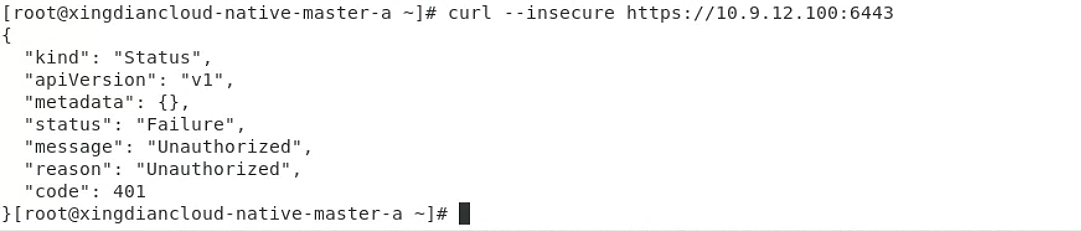

##### 5.6 Start the apiserver service

Note:

Start the apiserver service on each Master node in turn

```shell

[root@xingdiancloud-native-master-a k8s-work]# systemctl daemon-reload

[root@xingdiancloud-native-master-a k8s-work]# systemctl enable --now kube-apiserver

[root@xingdiancloud-native-master-a k8s-work]# systemctl status kube-apiserver

# Test

[root@xingdiancloud-native-master-a k8s-work]# curl --insecure https://10.9.12.60:6442/

[root@xingdiancloud-native-master-a k8s-work]# curl --insecure https://10.9.12.64:6442/

```

#### 6. Deploy kubectl

##### 6.1 Write the kubectl certificate signing request file

```shell

[root@xingdiancloud-native-master-a k8s-work]# cat > admin-csr.json << "EOF"

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:masters",

"OU": "system"

}

]

}

EOF

```

##### 6.2 Generate the certificate files

```shell

[root@xingdiancloud-native-master-a k8s-work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

```

##### 6.3 Copy the files to the target directory

```shell

[root@xingdiancloud-native-master-a k8s-work]# cp admin*.pem /etc/kubernetes/ssl/

```

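##### 6.4 Generate the kube.config file

`kube.config` contains everything needed to access the apiserver, such as the apiserver address, the CA certificate, and the client's own certificate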

```shell

[root@xingdiancloud-native-master-a k8s-work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://10.9.12.100:6443 --kubeconfig=kube.config

Cluster "kubernetes" set.

[root@xingdiancloud-native-master-a k8s-work]# cat kube.config

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURtakNDQW9LZ0F3SUJBZ0lVYTZieUMxOFA3SW5kTmJ6L1RaOW56emk5RGVJd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEVEQU9CZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEkwTURZeE1ERTBNelF3TUZvWERUTTBNRFl3T0RFME16UXdNRm93WlRFTE1Ba0cKQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbGFXcHBibWN4RURBTwpCZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByZFdKbGNtNWxkR1Z6Ck1JSUJJakFOQmdrcWhraUc5dzBCQVFFRkFBT0NBUThBTUlJQkNnS0NBUUVBc1NvbDFLdk5KanVYR0dydEFVNzAKcjZtdHJYdE5ZL3hBNnM2eVYzNUFKeS9JVXIyL1BESmpLbWVkTWJDa1NFZk1aT1FaREYrSFhyNmF2eDJHSEM2eAoxUGlGYTFTU3lKS1EzNnhPbllrTTZSbFZ1SEpWSlZNaHJUbEdsbVFtSzMxMmFxQVc2ZDhJWlNURitGQWVHbVlrCkJlWTlNdTFwRURhUTZ5eVVEV0d0ckE0azNBd1J2WTRHZ3BMVDNKY2JSQTJGTTFwQ2x5MjZlUEV2bzZHUG9kVE4KbmxmMVZ6RnB1amRoYTBIWkZ1QnVGODZuWTA2dHU3SjFOWDVSY0orUkUvaXBQcmxxQ2d6NlNTVWhidXJPeVNkVQozNWl2R2xUM05nUmxyUEdSSi9zaWJiTWZPaytWWk00b3JLZ1pPR2NrUTY4ekFtR2hqRklnKzVjMEFMenZnVGFqCjhRSURBUUFCbzBJd1FEQU9CZ05WSFE4QkFmOEVCQU1DQVFZd0R3WURWUjBUQVFIL0JBVXdBd0VCL3pBZEJnTlYKSFE0RUZnUVVoU2FRNGpNV1ZNK0ZKTUx3a3RYOFdyMkx6Zjh3RFFZSktvWklodmNOQVFFTEJRQURnZ0VCQUVuRwpRTStJMFlTejdMUEl4WlQrZE1xS3dLNDNyQmRsaExWbXBYSWJGM0FQVGdXeG5EOXVHOTBUZUcrSlVhbjZ5MEhwCitCWjlhNEdFbXo0ZGZHTlBrWFNqT2dDZElXb1IvUVF2eVdZei9jeUdob3NGWU9GTlB6MlRwVnZxSE9XSUROVlIKd2dkVmhFQUYzY0JYRjROeEE0bXhHL21iOGlCbUpxdnNNRGxEUHZRb0xxc1lOd0ZBTkxkNHIvdk82QUJWTGh2RgpNU1QxVXZGTGkyVGt5NWJ3VXBLNFZQelhuOGVwYjdxTnJvSExwZWpKVmg4a05TdVZOSXVJbHBFdk9BS0pQeGllCjg5QmJkOVpNZHVHTVpZNnRtaS9MNEg0bDdPQlNORWNnOWR5QXV2azlQTVFWblNJQzBac21PUjhVc2FnZlBxdEEKM0JiUkdSbDI3MW16eEc1VkhrWT0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=

server: https://10.9.12.100:6443

name: kubernetes

contexts: null

current-context: ""

kind: Config

preferences: {}

users: null

```

Configure the admin user

```shell

[root@xingdiancloud-native-master-a k8s-work]# kubectl config set-credentials admin --client-certificate=admin.pem --client-key=admin-key.pem --embed-certs=true --kubeconfig=kube.config

User "admin" set.

[root@xingdiancloud-native-master-a k8s-work]# cat kube.config

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURtakNDQW9LZ0F3SUJBZ0lVTzFVN3NvbE1URUxNb2lBU2VyNlV0RlFDbjZzd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEVEQU9CZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEkwTURFd05EQXhNVGd3TUZvWERUTTBNREV3TVRBeE1UZ3dNRm93WlRFTE1Ba0cKQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbGFXcHBibWN4RURBTwpCZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByZFdKbGNtNWxkR1Z6Ck1JSUJJakFOQmdrcWhraUc5dzBCQVFFRkFBT0NBUThBTUlJQkNnS0NBUUVBcjJxTGlPc0NpK2RZbnEwK1lha2EKUHZoZTdFMFY0c3BUd1ZkMWNSVGU3eTY1ekdXY2tKOXVCKzNKWjl3bGhkL3d5Y0NuaTN0d2RoVVh2bW1RRTdJcwphVkJhenpzRjFGcDE0MFdkbjVZcGZEd0V4OWR3QitaVE83SnZncXFBUTlnTlRyaGZ3UkpVeUVIZDcvaUY2NE5RCkhlTTJmQm1QUHpXeWwvdkc1bVB3UVBvL1krcnMxckZ6c2JVRG1EYmJ5enQxNjZXblVlVWozYzB3aFp1Y2hOb1oKSDFwbjZXTXFIdWxhM2FuaElQWGJVa0VXK1FVVzZLU1FnVm1MVzZZbVlEZGROYisxWkhxb1FKQUI5YUNRRlgrZgpnTXQzSnBIQXJPY3hWTG1leStMYzQ5RGhtQVZDZVcxQkZOeEhpTVZoZGo1czljWjQyUlgvV1diWlZpWjVGeGsyCldRSURBUUFCbzBJd1FEQU9CZ05WSFE4QkFmOEVCQU1DQVFZd0R3WURWUjBUQVFIL0JBVXdBd0VCL3pBZEJnTlYKSFE0RUZnUVVLYzZIV3RTRlQ3R3BFTFJtVUhaeldxUHdhRG93RFFZSktvWklodmNOQVFFTEJRQURnZ0VCQUYxZQpUY21oZEdzcUI5YmwxVjFVeE0wczg4dm05VjJ1dnhIOEhEVmtIdjJ4eUxucEFwVWpHQldPZ3JRdSsyMXErdE1lCkVNa2hRUzlFOGRQTkQzSkgxbmdob3lyN0dad3k0SFVmQkxoOXdNTVNHd3plaWJJd3lXRUtQMUlBbmlIbmFxUW4KTDBUMlVzZk81c0x6MXRiRHlKWXZQbXA4aHVJTkRCdFUrSmtGY3huM21lanluSFpVeEJEZExURUtqWlNib1VzVAo2NXArMzNzalhlc2wwQVZEMlg0UUo5NytWa0I4ZXNFcnZQaUVRdmJyWG9OQTVROVNZNk5xZ1JtUGo4VjNZTEdSCldXVjB3SGphT0dCUWx1OUlqbnBIWGs2U2JmTmpCd25vS01iWEFOcWVUWDdNSXgwOWd6QmphUTJWWUlnSFh3VloKbGY3azIxMDBkR0JNajRMd3hwND0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=

server: https://10.9.12.100:6443

name: kubernetes

contexts: null

current-context: ""

kind: Config

preferences: {}

users:

- name: admin

user:

client-certificate-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUQzVENDQXNXZ0F3SUJBZ0lVWUpLNkpBSXgvMlRXZFR0cGhOejd4WmxscVBFd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEVEQU9CZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEkwTURFd05EQTNNamt3TUZvWERUTTBNREV3TVRBM01qa3dNRm93YXpFTE1Ba0cKQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbGFXcHBibWN4RnpBVgpCZ05WQkFvVERuTjVjM1JsYlRwdFlYTjBaWEp6TVE4d0RRWURWUVFMRXdaemVYTjBaVzB4RGpBTUJnTlZCQU1UCkJXRmtiV2x1TUlJQklqQU5CZ2txaGtpRzl3MEJBUUVGQUFPQ0FROEFNSUlCQ2dLQ0FRRUF0RkVvOTNENnlXSysKMXpDRnhhMmwxUUNaUURxWnphWEU4YW9EQktEOHFES0V6YllUaE5PbVVBS1JiUUsrN2l1aXlVdTdRY3pqektXeApMSnhHSlpvRms3N29tbENMOVNlWURJY0EwM3l4cnlOTXR6eS92dkVkY0VtUGJuT1FaRytoMVBQQzRIZHJRdjh0CkNZdG1wcnhpSzdiRXZ4dDVWTEZXS2lDRnFMZHRHL3VGd0VLclZRc2RhQlVwNktkOHpoeFRTOGw2REZuaHVSU0gKZkVSK1cyeC8xUXF4Ri8zdkx2dzR0QzFkMnYwSFBqODB5aFozdXdNQWY0VEdKMXdFSmRCQ0VMSUFkUE9Jd1g1egpCTW44R0dPdkh1T2hmaGFOZ0pLUCtKbEUrZmlkMnFYZUoyNHBjM2Y2VGk2NGlyZno5SjhJbGpsMTNSZ1pyNWZlCjQrNTJ6VmZlYlFJREFRQUJvMzh3ZlRBT0JnTlZIUThCQWY4RUJBTUNCYUF3SFFZRFZSMGxCQll3RkFZSUt3WUIKQlFVSEF3RUdDQ3NHQVFVRkJ3TUNNQXdHQTFVZEV3RUIvd1FDTUFBd0hRWURWUjBPQkJZRUZHcGlWY2RYT0FLdgpzTFlvS0QyMGlQK1o5Z0dHTUI4R0ExVWRJd1FZTUJhQUZDbk9oMXJVaFUreHFSQzBabEIyYzFxajhHZzZNQTBHCkNTcUdTSWIzRFFFQkN3VUFBNElCQVFBYjVvT1VJdUJpY01jOW1hcTV4TExJY3I1Um5vcUIvbmxxU3krUFpaMHcKa2xrOEMwbzk0Q0FZb2VJRnhSNWlTcTBZdHRNbG5KRnJxSlcyR0ZENzZOeFRVeEtCSUtiL2llNVNMS3J6VVhXNApacG9mKzFHaUx6dnZRMENIYTZRQkIySkhpZjdSN0Y3RFY3b29JM3REdWNvNkZKcHpscmZNTHVoNHdwTkkyaGlOCk93cHk3Qm9TQVNOR2ZSRWRYOHJvZnRlVEF3RVpOM0txUEtianBETlBOS2ZSZzliRzhReElhMkJ6L0NVYkE0Q2oKR2tEZy9uQnNuMmJoejBlU1ZIdzJYL0tjUUhaSUhHSlBJajhjQ2NHK0tvMFhobll1cTEwVzk0cnppUE5JOHZZYwo4ZEVTQ2ZLT0dBdURmQWxRbTQyejUzaktYMWovMCs2dzluSG5ZKytXa3VaYQotLS0tLUVORCBDRVJUSUZJQ0FURS0tLS0tCg==

client-key-data: LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQpNSUlFcFFJQkFBS0NBUUVBdEZFbzkzRDZ5V0srMXpDRnhhMmwxUUNaUURxWnphWEU4YW9EQktEOHFES0V6YllUCmhOT21VQUtSYlFLKzdpdWl5VXU3UWN6anpLV3hMSnhHSlpvRms3N29tbENMOVNlWURJY0EwM3l4cnlOTXR6eS8KdnZFZGNFbVBibk9RWkcraDFQUEM0SGRyUXY4dENZdG1wcnhpSzdiRXZ4dDVWTEZXS2lDRnFMZHRHL3VGd0VLcgpWUXNkYUJVcDZLZDh6aHhUUzhsNkRGbmh1UlNIZkVSK1cyeC8xUXF4Ri8zdkx2dzR0QzFkMnYwSFBqODB5aFozCnV3TUFmNFRHSjF3RUpkQkNFTElBZFBPSXdYNXpCTW44R0dPdkh1T2hmaGFOZ0pLUCtKbEUrZmlkMnFYZUoyNHAKYzNmNlRpNjRpcmZ6OUo4SWxqbDEzUmdacjVmZTQrNTJ6VmZlYlFJREFRQUJBb0lCQVFDY3UzTDFhWkhEWEg1dgpRM0R6ZTFXS2lLT3NyWU1rdW5NdWI4MkJ4NEQxbmp2TEp2bGVXaTNVbS9iV0h5M2dqYk5JYnZoTVlKQ2RRR1I1ClZ6aXQxR3dHbVVsTFlMbldsTnpYL3J6Y0Z5WEhDdExTN3czb0pXS21TSHBRMGtodTFJMkJNWVJ4WWJ1dEYycUoKUWs4dW5NNWtHdEIzSUtWYzFXd0U0Qkh0cmNvOEovVGxkRVRkM3I0cTlKOHFEbXlQTjFRVUlDZ0VzSkdtV2RoNwplQkNpWEdSck9YR3VEcTFuQjB1ZjlLOEVkVE83MEU5ME9GUzFHdW1kbWhKOEFidGRYN1dRZU8wY25FRGRmRDVwCjZvS09nbXU2Q0xIU09ZZENiWndUUWZjUXlkU0JLUTdWOXpQNk85TFlBSHVDZlRkNVE5STg1elcvN0FrblB3YVkKU0JWMlhMdmhBb0dCQU9nRG1QNk51bTdJY0ZPY1oyT3MrL1AwZ1BoR0Q4djlCY25QSnZ0UHJDMmZsNXVhcmF2TQpSbkhXS2w2Wi9DYll1azljQ0dGdXdNMnBEYm1GakU2UUlxbjNNSXpQRGZ1czBBcFFmNWk1b0duTXh5eVlqTGxICkxzK3R4VDA5Znk0NzYyOENwOVRuRlJENzBxZXdSSDdKS3RXL3FlZldzaEprQzBoQzd1TEsrSDA1QW9HQkFNYjEKWGYvTXhLUHJsOTN1R21yMUlYMTNjend3dXpsWVpyUFdaUVBvdVpsZmIvODdwNTZxRHRjWkwrajlkVEswTU5BQQpERFJXbG1VSzBrYmpKTndXdEdPcTN0ZmFRU1pnV2I0amhHR0Y1cWp0alVMSHNUZDB3OG8yREVESGpwZW5DMG85CktkWWQrRkZ6NDBkVVhwK3RHODNZa25JcnNNeVRUY3BGdllwSVF4N1ZBb0dCQUtxZHlxcVhDdHhnNWNsMm9NazUKOG1ZcURaV0Y0Q1FBUTN0dXJKbnVzdzB4NlVseWEvaUVWZUZzdnVlbWtUajM4N3BjVVlWazdyL09hOXRjREJ2Ugovc3ZDalo5ZXZFZXhnNk95SXNMcTdyNGU2dkV1bFgzQ2pQZ0lMNTJqVloxb1R1L3BvZ1g4a1E5V1FFazBaSXBmCjRQSWk2ZzBsWXZvSFBBeTl1L0pubEdoeEFvR0JBTGhhWjIwOUdnQWhyeWpQRmQrQm9EU1gyRWt2aG13T2c2dWoKdnhvdUxMdjIrTm54TnRJSUZaUXVISHl4VGtWYlBkZWVFN0R6Z292QnlUSXlDdGQ4bWsyMzZLRHQ5V3hQM3hnVgo1UFpRa25oNUZXbUppNll0SmJaYSttT1VCWVowSER3QURLSUFSeldDUWxpM3pxMzZRMGNycEJieWNQSStrOWdYCll4ZWMrY1M1QW9HQUUyN1R0by9MNVpxMFp
NOUFkM3ZZSUhsRmM4TWdMU3lRbDhaZENsd25LUWNiVzdNVkYxWGwKV1duWEtMU2k0SEVmcmNxMlpPSnM3UHlMMDJrWXgwV0FDZnp1VmtzQ1BvRUZScUdJMFdkWnBPaXhkTWdlZDYrYgpDSnd3Rzd6MFFTOHg5aWhhWllpdUY2VWhFME8zNjFRSVhqMUVZb1pWQzU3Uy82OTR6cVpDNzhNPQotLS0tLUVORCBSU0EgUFJJVkFURSBLRVktLS0tLQo=

```

Create the context:

```shell

[root@xingdiancloud-native-master-a k8s-work]# kubectl config set-context kubernetes --cluster=kubernetes --user=admin --kubeconfig=kube.config

Context "kubernetes" created.

[root@xingdiancloud-native-master-a k8s-work]# cat kube.config

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURtakNDQW9LZ0F3SUJBZ0lVTzFVN3NvbE1URUxNb2lBU2VyNlV0RlFDbjZzd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEVEQU9CZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEkwTURFd05EQXhNVGd3TUZvWERUTTBNREV3TVRBeE1UZ3dNRm93WlRFTE1Ba0cKQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbGFXcHBibWN4RURBTwpCZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByZFdKbGNtNWxkR1Z6Ck1JSUJJakFOQmdrcWhraUc5dzBCQVFFRkFBT0NBUThBTUlJQkNnS0NBUUVBcjJxTGlPc0NpK2RZbnEwK1lha2EKUHZoZTdFMFY0c3BUd1ZkMWNSVGU3eTY1ekdXY2tKOXVCKzNKWjl3bGhkL3d5Y0NuaTN0d2RoVVh2bW1RRTdJcwphVkJhenpzRjFGcDE0MFdkbjVZcGZEd0V4OWR3QitaVE83SnZncXFBUTlnTlRyaGZ3UkpVeUVIZDcvaUY2NE5RCkhlTTJmQm1QUHpXeWwvdkc1bVB3UVBvL1krcnMxckZ6c2JVRG1EYmJ5enQxNjZXblVlVWozYzB3aFp1Y2hOb1oKSDFwbjZXTXFIdWxhM2FuaElQWGJVa0VXK1FVVzZLU1FnVm1MVzZZbVlEZGROYisxWkhxb1FKQUI5YUNRRlgrZgpnTXQzSnBIQXJPY3hWTG1leStMYzQ5RGhtQVZDZVcxQkZOeEhpTVZoZGo1czljWjQyUlgvV1diWlZpWjVGeGsyCldRSURBUUFCbzBJd1FEQU9CZ05WSFE4QkFmOEVCQU1DQVFZd0R3WURWUjBUQVFIL0JBVXdBd0VCL3pBZEJnTlYKSFE0RUZnUVVLYzZIV3RTRlQ3R3BFTFJtVUhaeldxUHdhRG93RFFZSktvWklodmNOQVFFTEJRQURnZ0VCQUYxZQpUY21oZEdzcUI5YmwxVjFVeE0wczg4dm05VjJ1dnhIOEhEVmtIdjJ4eUxucEFwVWpHQldPZ3JRdSsyMXErdE1lCkVNa2hRUzlFOGRQTkQzSkgxbmdob3lyN0dad3k0SFVmQkxoOXdNTVNHd3plaWJJd3lXRUtQMUlBbmlIbmFxUW4KTDBUMlVzZk81c0x6MXRiRHlKWXZQbXA4aHVJTkRCdFUrSmtGY3huM21lanluSFpVeEJEZExURUtqWlNib1VzVAo2NXArMzNzalhlc2wwQVZEMlg0UUo5NytWa0I4ZXNFcnZQaUVRdmJyWG9OQTVROVNZNk5xZ1JtUGo4VjNZTEdSCldXVjB3SGphT0dCUWx1OUlqbnBIWGs2U2JmTmpCd25vS01iWEFOcWVUWDdNSXgwOWd6QmphUTJWWUlnSFh3VloKbGY3azIxMDBkR0JNajRMd3hwND0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=

server: https://10.9.12.100:6443

name: kubernetes

contexts:

- context:

cluster: kubernetes

user: admin

name: kubernetes

current-context: ""

kind: Config

preferences: {}

users:

- name: admin

user:

client-certificate-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUQzVENDQXNXZ0F3SUJBZ0lVWUpLNkpBSXgvMlRXZFR0cGhOejd4WmxscVBFd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEVEQU9CZ05WQkFvVEIydDFZbVZ0YzJJeEN6QUpCZ05WQkFzVEFrTk9NUk13RVFZRFZRUURFd3ByCmRXSmxjbTVsZEdWek1CNFhEVEkwTURFd05EQTNNamt3TUZvWERUTTBNREV3TVRBM01qa3dNRm93YXpFTE1Ba0cKQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbGFXcHBibWN4RnpBVgpCZ05WQkFvVERuTjVjM1JsYlRwdFlYTjBaWEp6TVE4d0RRWURWUVFMRXdaemVYTjBaVzB4RGpBTUJnTlZCQU1UCkJXRmtiV2x1TUlJQklqQU5CZ2txaGtpRzl3MEJBUUVGQUFPQ0FROEFNSUlCQ2dLQ0FRRUF0RkVvOTNENnlXSysKMXpDRnhhMmwxUUNaUURxWnphWEU4YW9EQktEOHFES0V6YllUaE5PbVVBS1JiUUsrN2l1aXlVdTdRY3pqektXeApMSnhHSlpvRms3N29tbENMOVNlWURJY0EwM3l4cnlOTXR6eS92dkVkY0VtUGJuT1FaRytoMVBQQzRIZHJRdjh0CkNZdG1wcnhpSzdiRXZ4dDVWTEZXS2lDRnFMZHRHL3VGd0VLclZRc2RhQlVwNktkOHpoeFRTOGw2REZuaHVSU0gKZkVSK1cyeC8xUXF4Ri8zdkx2dzR0QzFkMnYwSFBqODB5aFozdXdNQWY0VEdKMXdFSmRCQ0VMSUFkUE9Jd1g1egpCTW44R0dPdkh1T2hmaGFOZ0pLUCtKbEUrZmlkMnFYZUoyNHBjM2Y2VGk2NGlyZno5SjhJbGpsMTNSZ1pyNWZlCjQrNTJ6VmZlYlFJREFRQUJvMzh3ZlRBT0JnTlZIUThCQWY4RUJBTUNCYUF3SFFZRFZSMGxCQll3RkFZSUt3WUIKQlFVSEF3RUdDQ3NHQVFVRkJ3TUNNQXdHQTFVZEV3RUIvd1FDTUFBd0hRWURWUjBPQkJZRUZHcGlWY2RYT0FLdgpzTFlvS0QyMGlQK1o5Z0dHTUI4R0ExVWRJd1FZTUJhQUZDbk9oMXJVaFUreHFSQzBabEIyYzFxajhHZzZNQTBHCkNTcUdTSWIzRFFFQkN3VUFBNElCQVFBYjVvT1VJdUJpY01jOW1hcTV4TExJY3I1Um5vcUIvbmxxU3krUFpaMHcKa2xrOEMwbzk0Q0FZb2VJRnhSNWlTcTBZdHRNbG5KRnJxSlcyR0ZENzZOeFRVeEtCSUtiL2llNVNMS3J6VVhXNApacG9mKzFHaUx6dnZRMENIYTZRQkIySkhpZjdSN0Y3RFY3b29JM3REdWNvNkZKcHpscmZNTHVoNHdwTkkyaGlOCk93cHk3Qm9TQVNOR2ZSRWRYOHJvZnRlVEF3RVpOM0txUEtianBETlBOS2ZSZzliRzhReElhMkJ6L0NVYkE0Q2oKR2tEZy9uQnNuMmJoejBlU1ZIdzJYL0tjUUhaSUhHSlBJajhjQ2NHK0tvMFhobll1cTEwVzk0cnppUE5JOHZZYwo4ZEVTQ2ZLT0dBdURmQWxRbTQyejUzaktYMWovMCs2dzluSG5ZKytXa3VaYQotLS0tLUVORCBDRVJUSUZJQ0FURS0tLS0tCg==

client-key-data: LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQpNSUlFcFFJQkFBS0NBUUVBdEZFbzkzRDZ5V0srMXpDRnhhMmwxUUNaUURxWnphWEU4YW9EQktEOHFES0V6YllUCmhOT21VQUtSYlFLKzdpdWl5VXU3UWN6anpLV3hMSnhHSlpvRms3N29tbENMOVNlWURJY0EwM3l4cnlOTXR6eS8KdnZFZGNFbVBibk9RWkcraDFQUEM0SGRyUXY4dENZdG1wcnhpSzdiRXZ4dDVWTEZXS2lDRnFMZHRHL3VGd0VLcgpWUXNkYUJVcDZLZDh6aHhUUzhsNkRGbmh1UlNIZkVSK1cyeC8xUXF4Ri8zdkx2dzR0QzFkMnYwSFBqODB5aFozCnV3TUFmNFRHSjF3RUpkQkNFTElBZFBPSXdYNXpCTW44R0dPdkh1T2hmaGFOZ0pLUCtKbEUrZmlkMnFYZUoyNHAKYzNmNlRpNjRpcmZ6OUo4SWxqbDEzUmdacjVmZTQrNTJ6VmZlYlFJREFRQUJBb0lCQVFDY3UzTDFhWkhEWEg1dgpRM0R6ZTFXS2lLT3NyWU1rdW5NdWI4MkJ4NEQxbmp2TEp2bGVXaTNVbS9iV0h5M2dqYk5JYnZoTVlKQ2RRR1I1ClZ6aXQxR3dHbVVsTFlMbldsTnpYL3J6Y0Z5WEhDdExTN3czb0pXS21TSHBRMGtodTFJMkJNWVJ4WWJ1dEYycUoKUWs4dW5NNWtHdEIzSUtWYzFXd0U0Qkh0cmNvOEovVGxkRVRkM3I0cTlKOHFEbXlQTjFRVUlDZ0VzSkdtV2RoNwplQkNpWEdSck9YR3VEcTFuQjB1ZjlLOEVkVE83MEU5ME9GUzFHdW1kbWhKOEFidGRYN1dRZU8wY25FRGRmRDVwCjZvS09nbXU2Q0xIU09ZZENiWndUUWZjUXlkU0JLUTdWOXpQNk85TFlBSHVDZlRkNVE5STg1elcvN0FrblB3YVkKU0JWMlhMdmhBb0dCQU9nRG1QNk51bTdJY0ZPY1oyT3MrL1AwZ1BoR0Q4djlCY25QSnZ0UHJDMmZsNXVhcmF2TQpSbkhXS2w2Wi9DYll1azljQ0dGdXdNMnBEYm1GakU2UUlxbjNNSXpQRGZ1czBBcFFmNWk1b0duTXh5eVlqTGxICkxzK3R4VDA5Znk0NzYyOENwOVRuRlJENzBxZXdSSDdKS3RXL3FlZldzaEprQzBoQzd1TEsrSDA1QW9HQkFNYjEKWGYvTXhLUHJsOTN1R21yMUlYMTNjend3dXpsWVpyUFdaUVBvdVpsZmIvODdwNTZxRHRjWkwrajlkVEswTU5BQQpERFJXbG1VSzBrYmpKTndXdEdPcTN0ZmFRU1pnV2I0amhHR0Y1cWp0alVMSHNUZDB3OG8yREVESGpwZW5DMG85CktkWWQrRkZ6NDBkVVhwK3RHODNZa25JcnNNeVRUY3BGdllwSVF4N1ZBb0dCQUtxZHlxcVhDdHhnNWNsMm9NazUKOG1ZcURaV0Y0Q1FBUTN0dXJKbnVzdzB4NlVseWEvaUVWZUZzdnVlbWtUajM4N3BjVVlWazdyL09hOXRjREJ2Ugovc3ZDalo5ZXZFZXhnNk95SXNMcTdyNGU2dkV1bFgzQ2pQZ0lMNTJqVloxb1R1L3BvZ1g4a1E5V1FFazBaSXBmCjRQSWk2ZzBsWXZvSFBBeTl1L0pubEdoeEFvR0JBTGhhWjIwOUdnQWhyeWpQRmQrQm9EU1gyRWt2aG13T2c2dWoKdnhvdUxMdjIrTm54TnRJSUZaUXVISHl4VGtWYlBkZWVFN0R6Z292QnlUSXlDdGQ4bWsyMzZLRHQ5V3hQM3hnVgo1UFpRa25oNUZXbUppNll0SmJaYSttT1VCWVowSER3QURLSUFSeldDUWxpM3pxMzZRMGNycEJieWNQSStrOWdYCll4ZWMrY1M1QW9HQUUyN1R0by9MNVpxMFp
NOUFkM3ZZSUhsRmM4TWdMU3lRbDhaZENsd25LUWNiVzdNVkYxWGwKV1duWEtMU2k0SEVmcmNxMlpPSnM3UHlMMDJrWXgwV0FDZnp1VmtzQ1BvRUZScUdJMFdkWnBPaXhkTWdlZDYrYgpDSnd3Rzd6MFFTOHg5aWhhWllpdUY2VWhFME8zNjFRSVhqMUVZb1pWQzU3Uy82OTR6cVpDNzhNPQotLS0tLUVORCBSU0EgUFJJVkFURSBLRVktLS0tLQo=

```
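
The dump above still shows `current-context: ""`, because `kubectl config set-context` only defines a context and does not select it. The sketch below is a convenience check rather than part of the original procedure: `kubeconfig_current_context` is a hypothetical helper name that reads the `current-context` field with awk, and the comment names the standard `kubectl config use-context` subcommand that would select the `kubernetes` context.

```shell
# Print the current-context value of a kubeconfig file (empty if unset).
# Hypothetical helper for illustration; it is not part of kubectl.
kubeconfig_current_context() {
  awk '$1 == "current-context:" { gsub(/"/, "", $2); print $2; exit }' "$1"
}

# If kube.config exists in the working directory and has no context selected,
# print the kubectl command that selects the context created above.
if [ -f kube.config ] && [ -z "$(kubeconfig_current_context kube.config)" ]; then
  echo "no current-context set; run:"
  echo "  kubectl config use-context kubernetes --kubeconfig=kube.config"
fi
```

Selecting the context before copying the file to `~/.kube/config` means later `kubectl` calls should not need an explicit `--context` flag.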

##### 6.5 Prepare the kubectl configuration file and create the role binding

```shell

[root@xingdiancloud-native-master-a k8s-work]# mkdir ~/.kube

[root@xingdiancloud-native-master-a k8s-work]# cp kube.config ~/.kube/config

[root@xingdiancloud-native-master-a k8s-work]# kubectl create clusterrolebinding kube-apiserver:kubelet-apis --clusterrole=system:kubelet-api-admin --user kubernetes --kubeconfig=/root/.kube/config

```

##### 6.6 Check the cluster status

Show the cluster information:

```shell

[root@xingdiancloud-native-master-a k8s-work]# kubectl cluster-info

```

Check the status of the cluster components:

```shell

[root@xingdiancloud-native-master-a k8s-work]# kubectl get componentstatuses

```

List the resource objects in all namespaces:

```shell

[root@xingdiancloud-native-master-a k8s-work]# kubectl get all --all-namespaces

```

##### 6.7 Sync the kubectl configuration file to the other master node

```shell

# On xingdiancloud-native-master-b, create the directory

[root@xingdiancloud-native-master-b ~]# mkdir /root/.kube

# Then copy the configuration file over from xingdiancloud-native-master-a

[root@xingdiancloud-native-master-a ~]# scp /root/.kube/config xingdiancloud-native-master-b:/root/.kube/config

```

#### 7. Deploy kube-controller-manager

##### 7.1 Create the kube-controller-manager certificate signing request file

```shell

[root@xingdiancloud-native-master-a ~]# cat > kube-controller-manager-csr.json << "EOF"

{

"CN": "system:kube-controller-manager",

"key": {

"algo": "rsa",

"size": 2048

},

"hosts": [

"127.0.0.1",

"10.9.12.60",

"10.9.12.64"

],

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-controller-manager",

"OU": "system"

}

]

}

EOF

```

##### 7.2 Generate the kube-controller-manager certificate

```shell

[root@xingdiancloud-native-master-a ~]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

```

Note: the command above produces the following files:

kube-controller-manager.csr

kube-controller-manager-csr.json

kube-controller-manager-key.pem

kube-controller-manager.pem
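
Before building the kubeconfig it can help to confirm that all four files really exist. The helper below is a minimal sketch; the function name `check_cfssl_outputs` and its arguments are assumptions made for illustration, not something the original procedure defines.

```shell
# check_cfssl_outputs NAME [DIR]
# Succeeds only if NAME.csr, NAME-csr.json, NAME-key.pem and NAME.pem all
# exist in DIR (default: current directory); reports the first missing file.
check_cfssl_outputs() {
  name="$1"
  dir="${2:-.}"
  for f in "$name.csr" "$name-csr.json" "$name-key.pem" "$name.pem"; do
    if [ ! -f "$dir/$f" ]; then
      echo "missing: $dir/$f" >&2
      return 1
    fi
  done
}

# Usage, from the directory where cfssl was run:
#   check_cfssl_outputs kube-controller-manager && echo "all files present"
# The certificate subject can then be inspected with openssl:
#   openssl x509 -in kube-controller-manager.pem -noout -subject
```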

##### 7.3 Create kube-controller-manager.kubeconfig for kube-controller-manager

```shell

[root@xingdiancloud-native-master-a ~]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://10.9.12.100:6443 --kubeconfig=kube-controller-manager.kubeconfig

[root@xingdiancloud-native-master-a ~]# kubectl config set-credentials system:kube-controller-manager --client-certificate=kube-controller-manager.pem --client-key=kube-controller-manager-key.pem --embed-certs=true --kubeconfig=kube-controller-manager.kubeconfig

[root@xingdiancloud-native-master-a ~]# kubectl config set-context system:kube-controller-manager --cluster=kubernetes --user=system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

[root@xingdiancloud-native-master-a ~]# kubectl config use-context system:kube-controller-manager --kubeconfig=kube-controller-manager.kubeconfig

```

##### 7.4 Create the kube-controller-manager configuration file

```shell

[root@xingdiancloud-native-master-a ~]# cat > kube-controller-manager.conf << "EOF"
KUBE_CONTROLLER_MANAGER_OPTS=" \
  --secure-port=10257 \
  --bind-address=127.0.0.1 \
  --kubeconfig=/etc/kubernetes/kube-controller-manager.kubeconfig \
  --service-cluster-ip-range=10.96.0.0/16 \
  --cluster-name=kubernetes \
  --cluster-signing-cert-file=/etc/kubernetes/ssl/ca.pem \
  --cluster-signing-key-file=/etc/kubernetes/ssl/ca-key.pem \
  --allocate-node-cidrs=true \
  --cluster-cidr=10.244.0.0/16 \
  --root-ca-file=/etc/kubernetes/ssl/ca.pem \
  --service-account-private-key-file=/etc/kubernetes/ssl/ca-key.pem \
  --leader-elect=true \
  --feature-gates=RotateKubeletServerCertificate=true \
  --controllers=*,bootstrapsigner,tokencleaner \
  --tls-cert-file=/etc/kubernetes/ssl/kube-controller-manager.pem \
  --tls-private-key-file=/etc/kubernetes/ssl/kube-controller-manager-key.pem \
  --use-service-account-credentials=true \
  --v=2"
EOF

```

##### 7.5 Create the systemd unit file

```shell

[root@xingdiancloud-native-master-a ~]# cat > kube-controller-manager.service << "EOF"

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/etc/kubernetes/kube-controller-manager.conf

ExecStart=/usr/local/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

```

##### 7.6 Sync the files to the cluster master nodes

Local copy:

```shell

[root@xingdiancloud-native-master-a ~]# cp kube-controller-manager*.pem /etc/kubernetes/ssl/

[root@xingdiancloud-native-master-a ~]# cp kube-controller-manager.kubeconfig /etc/kubernetes/

[root@xingdiancloud-native-master-a ~]# cp kube-controller-manager.conf /etc/kubernetes/

[root@xingdiancloud-native-master-a ~]# cp kube-controller-manager.service /usr/lib/systemd/system/

```

Remote copy:

```shell

[root@xingdiancloud-native-master-a ~]# scp kube-controller-manager*.pem xingdiancloud-native-master-b:/etc/kubernetes/ssl/

[root@xingdiancloud-native-master-a ~]# scp kube-controller-manager.kubeconfig kube-controller-manager.conf xingdiancloud-native-master-b:/etc/kubernetes/

[root@xingdiancloud-native-master-a ~]# scp kube-controller-manager.service xingdiancloud-native-master-b:/usr/lib/systemd/system/

```
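
This section copies the unit file to both masters but does not show the service being started, even though the `kubectl get cs` output later in the document reports controller-manager as healthy. The following is a minimal sketch of that start step, assuming passwordless SSH from master-a to both masters (consistent with the scp commands above). `MASTERS`, `DRY_RUN` and `start_controller_manager` are illustrative names; with `DRY_RUN=1`, the default here, the commands are only printed.

```shell
# Masters taken from the host plan at the top of the document.
MASTERS="xingdiancloud-native-master-a xingdiancloud-native-master-b"
# DRY_RUN=1 prints the commands; set DRY_RUN=0 to execute them over ssh.
DRY_RUN="${DRY_RUN:-1}"

start_controller_manager() {
  for host in $MASTERS; do
    for cmd in "systemctl daemon-reload" \
               "systemctl enable --now kube-controller-manager" \
               "systemctl is-active kube-controller-manager"; do
      if [ "$DRY_RUN" = "1" ]; then
        echo "ssh $host $cmd"
      else
        ssh "$host" "$cmd"
      fi
    done
  done
}

start_controller_manager
```

Running the same three systemctl commands by hand on each master is equivalent; the loop only saves repetition.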

#### 8. Deploy kube-scheduler

##### 8.1 Create the kube-scheduler certificate signing request file

```shell

[root@xingdiancloud-native-master-a ~]# cat > kube-scheduler-csr.json << "EOF"

{

"CN": "system:kube-scheduler",

"hosts": [

"127.0.0.1",

"10.9.12.60",

"10.9.12.64"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "system:kube-scheduler",

"OU": "system"

}

]

}

EOF

```

##### 8.2 Generate the kube-scheduler certificate

```shell

[root@xingdiancloud-native-master-a ~]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

```

Note: the command above produces the following files:

kube-scheduler.csr

kube-scheduler-csr.json

kube-scheduler-key.pem

kube-scheduler.pem

##### 8.3 Create the kubeconfig for kube-scheduler

```shell

[root@xingdiancloud-native-master-a ~]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://10.9.12.100:6443 --kubeconfig=kube-scheduler.kubeconfig

[root@xingdiancloud-native-master-a ~]# kubectl config set-credentials system:kube-scheduler --client-certificate=kube-scheduler.pem --client-key=kube-scheduler-key.pem --embed-certs=true --kubeconfig=kube-scheduler.kubeconfig

[root@xingdiancloud-native-master-a ~]# kubectl config set-context system:kube-scheduler --cluster=kubernetes --user=system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

[root@xingdiancloud-native-master-a ~]# kubectl config use-context system:kube-scheduler --kubeconfig=kube-scheduler.kubeconfig

```

##### 8.4 Create the service configuration file

```shell

[root@xingdiancloud-native-master-a ~]# cat > kube-scheduler.conf << "EOF"
KUBE_SCHEDULER_OPTS=" \
  --kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig \
  --leader-elect=true \
  --v=2"
EOF

```

##### 8.5 Create the systemd unit file

```shell

[root@xingdiancloud-native-master-a ~]# cat > kube-scheduler.service << "EOF"

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/etc/kubernetes/kube-scheduler.conf

ExecStart=/usr/local/bin/kube-scheduler $KUBE_SCHEDULER_OPTS

Restart=on-failure

RestartSec=5

[Install]

WantedBy=multi-user.target

EOF

```

##### 8.6 Sync the files to the cluster master nodes

Local copy:

```shell

[root@xingdiancloud-native-master-a ~]# cp kube-scheduler*.pem /etc/kubernetes/ssl/

[root@xingdiancloud-native-master-a ~]# cp kube-scheduler.kubeconfig /etc/kubernetes/

[root@xingdiancloud-native-master-a ~]# cp kube-scheduler.conf /etc/kubernetes/

[root@xingdiancloud-native-master-a ~]# cp kube-scheduler.service /usr/lib/systemd/system/

```

Remote copy:

```shell

[root@xingdiancloud-native-master-a ~]# scp kube-scheduler*.pem xingdiancloud-native-master-b:/etc/kubernetes/ssl/

[root@xingdiancloud-native-master-a ~]# scp kube-scheduler.kubeconfig kube-scheduler.conf xingdiancloud-native-master-b:/etc/kubernetes/

[root@xingdiancloud-native-master-a ~]# scp kube-scheduler.service xingdiancloud-native-master-b:/usr/lib/systemd/system/

```

##### 8.7 Start the service

Note: run on all master nodes.

```shell

[root@xingdiancloud-native-master-a ~]# systemctl daemon-reload

[root@xingdiancloud-native-master-a ~]# systemctl enable --now kube-scheduler

[root@xingdiancloud-native-master-a ~]# systemctl status kube-scheduler

● kube-scheduler.service - Kubernetes Scheduler

Loaded: loaded (/usr/lib/systemd/system/kube-scheduler.service; enabled; vendor preset: disabled)

Active: active (running) since Thu 2024-01-04 16:45:18 CST; 1min 9s ago

Docs: https://github.com/kubernetes/kubernetes

Main PID: 6131 (kube-scheduler)

CGroup: /system.slice/kube-scheduler.service

└─6131 /usr/local/bin/kube-scheduler --kubeconfig=/etc/kubernetes/kube-scheduler.kubeconfig --leader-elect=true --v=2

Jan 04 16:45:19 k8s-master1 kube-scheduler[6131]: schedulerName: default-scheduler

Jan 04 16:45:19 k8s-master1 kube-scheduler[6131]: >

Jan 04 16:45:19 k8s-master1 kube-scheduler[6131]: I0104 16:45:19.437013 6131 server.go:154] "Starting Kubernetes Scheduler" version="v1.28.0"

Jan 04 16:45:19 k8s-master1 kube-scheduler[6131]: I0104 16:45:19.437027 6131 server.go:156] "Golang settings" GOGC="" GOMAXPROCS="" GOTRACEBACK=""

Jan 04 16:45:19 k8s-master1 kube-scheduler[6131]: I0104 16:45:19.438927 6131 tlsconfig.go:200] "Loaded serving cert" certName="Generated self signed cert" cer...

Jan 04 16:45:19 k8s-master1 kube-scheduler[6131]: I0104 16:45:19.439276 6131 named_certificates.go:53] "Loaded SNI cert" index=0 certName="self-signed loopbac...

Jan 04 16:45:19 k8s-master1 kube-scheduler[6131]: I0104 16:45:19.439311 6131 secure_serving.go:210] Serving securely on [::]:10259

Jan 04 16:45:19 k8s-master1 kube-scheduler[6131]: I0104 16:45:19.439359 6131 tlsconfig.go:240] "Starting DynamicServingCertificateController"

Jan 04 16:45:19 k8s-master1 kube-scheduler[6131]: I0104 16:45:19.540853 6131 leaderelection.go:250] attempting to acquire leader lease kube-system/ku...eduler...

Jan 04 16:45:19 k8s-master1 kube-scheduler[6131]: I0104 16:45:19.555700 6131 leaderelection.go:260] successfully acquired lease kube-system/kube-scheduler

Hint: Some lines were ellipsized, use -l to show in full.

```

```shell

[root@xingdiancloud-native-master-a ~]# kubectl get cs

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-0 Healthy ok

```

#### 9. Deploy the worker nodes

##### 9.1 Deploy the Docker container runtime

Note: run on all worker nodes.

```shell

[root@xingdiancloud-native-node-a ~]# wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

[root@xingdiancloud-native-node-a ~]# yum -y install docker-ce

[root@xingdiancloud-native-node-a ~]# systemctl enable --now docker

[root@xingdiancloud-native-node-a ~]# cat << EOF | sudo tee /etc/docker/daemon.json

{

"exec-opts": ["native.cgroupdriver=systemd"]

}

EOF

[root@xingdiancloud-native-node-a ~]# systemctl restart docker

```

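##### 9.2 Install cri-dockerd

Note:

cri-dockerd is the CRI adapter that lets kubelet drive Docker Engine; it is required because Kubernetes removed the built-in dockershim in v1.24.

Install it on all worker nodes.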

```shell

[root@xingdiancloud-native-node-a ~]# wget https://github.com/Mirantis/cri-dockerd/releases/download/v0.3.9/cri-dockerd-0.3.9-3.el7.x86_64.rpm

[root@xingdiancloud-native-node-a ~]# yum install -y cri-dockerd-0.3.9-3.el7.x86_64.rpm

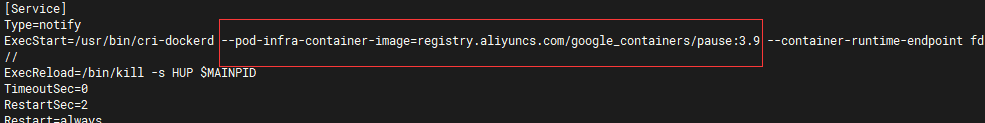

[root@xingdiancloud-native-node-a ~]# vi /usr/lib/systemd/system/cri-docker.service

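# Edit line 10: the default pause (pod infra) image version is too old, so pin it to 3.9. The Alibaba Cloud registry mirror is used because image pulls are faster from within China.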

ExecStart=/usr/bin/cri-dockerd --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.9 --container-runtime-endpoint fd://

```

```shell

[root@xingdiancloud-native-node-a ~]# systemctl enable --now cri-docker

Created symlink from /etc/systemd/system/multi-user.target.wants/cri-docker.service to /usr/lib/systemd/system/cri-docker.service.

[root@xingdiancloud-native-node-a ~]# systemctl status cri-docker

● cri-docker.service - CRI Interface for Docker Application Container Engine

Loaded: loaded (/usr/lib/systemd/system/cri-docker.service; enabled; vendor preset: disabled)

Active: active (running) since Fri 2024-01-05 08:29:57 CST; 3s ago

Docs: https://docs.mirantis.com

Main PID: 1821 (cri-dockerd)

Tasks: 7

Memory: 13.8M

CGroup: /system.slice/cri-docker.service

└─1821 /usr/bin/cri-dockerd --pod-infra-container-image=registry.k8s.io/pause:3.9 --container-runtime-endpoint fd://

Jan 05 08:29:57 k8s-node2 cri-dockerd[1821]: time="2024-01-05T08:29:57+08:00" level=info msg="Connecting to docker on the Endpoint unix:///var/run/docker.sock"

Jan 05 08:29:57 k8s-node2 cri-dockerd[1821]: time="2024-01-05T08:29:57+08:00" level=info msg="Start docker client with request timeout 0s"

Jan 05 08:29:57 k8s-node2 cri-dockerd[1821]: time="2024-01-05T08:29:57+08:00" level=info msg="Hairpin mode is set to none"

Jan 05 08:29:57 k8s-node2 cri-dockerd[1821]: time="2024-01-05T08:29:57+08:00" level=info msg="Loaded network plugin cni"

Jan 05 08:29:57 k8s-node2 cri-dockerd[1821]: time="2024-01-05T08:29:57+08:00" level=info msg="Docker cri networking managed by network plugin cni"

Jan 05 08:29:57 k8s-node2 cri-dockerd[1821]: time="2024-01-05T08:29:57+08:00" level=info msg="Setting cgroupDriver systemd"

Jan 05 08:29:57 k8s-node2 cri-dockerd[1821]: time="2024-01-05T08:29:57+08:00" level=info msg="Docker cri received runtime config &RuntimeConfig{NetworkC...idr:,},}"

Jan 05 08:29:57 k8s-node2 cri-dockerd[1821]: time="2024-01-05T08:29:57+08:00" level=info msg="Starting the GRPC backend for the Docker CRI interface."

Jan 05 08:29:57 k8s-node2 cri-dockerd[1821]: time="2024-01-05T08:29:57+08:00" level=info msg="Start cri-dockerd grpc backend"

Jan 05 08:29:57 k8s-node2 systemd[1]: Started CRI Interface for Docker Application Container Engine.

Hint: Some lines were ellipsized, use -l to show in full.

```

The socket cri-dockerd.sock now exists under /run; this is the endpoint kubelet later uses to reach Docker.

```shell

[root@xingdiancloud-native-node-a run]# ll /run/cri-dockerd.sock

srw-rw---- 1 root docker 0 Jan 5 08:33 /run/cri-dockerd.sock

```
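
Because kubelet depends on this socket, a small wait-and-check helper can be useful in scripted installs. The sketch below assumes the socket path shown above; `wait_for_socket` is a hypothetical helper name, not a cri-dockerd or kubelet command.

```shell
# wait_for_socket PATH [SECONDS]
# Polls once per second until PATH is a UNIX socket; returns 1 on timeout
# (default timeout: 30 seconds, 0 means check once without waiting).
wait_for_socket() {
  path="$1"
  remaining="${2:-30}"
  while [ ! -S "$path" ]; do
    [ "$remaining" -gt 0 ] || return 1
    sleep 1
    remaining=$((remaining - 1))
  done
}

# One-shot check of the cri-dockerd socket.
if wait_for_socket /run/cri-dockerd.sock 0; then
  echo "cri-dockerd socket is present"
else
  echo "cri-dockerd socket not found; check 'systemctl status cri-docker'"
fi
```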

##### 9.3 Deploy kubelet

Note:

Run on xingdiancloud-native-master-a.

###### 9.3.1 Create kubelet-bootstrap.kubeconfig

```shell

[root@xingdiancloud-native-master-a k8s-work]# BOOTSTRAP_TOKEN=$(awk -F "," '{print $1}' /etc/kubernetes/token.csv)

[root@xingdiancloud-native-master-a k8s-work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://10.9.12.100:6443 --kubeconfig=kubelet-bootstrap.kubeconfig

[root@xingdiancloud-native-master-a k8s-work]# kubectl config set-credentials kubelet-bootstrap --token=${BOOTSTRAP_TOKEN} --kubeconfig=kubelet-bootstrap.kubeconfig

[root@xingdiancloud-native-master-a k8s-work]# kubectl config set-context default --cluster=kubernetes --user=kubelet-bootstrap --kubeconfig=kubelet-bootstrap.kubeconfig

[root@xingdiancloud-native-master-a k8s-work]# kubectl config use-context default --kubeconfig=kubelet-bootstrap.kubeconfig

[root@xingdiancloud-native-master-a k8s-work]# kubectl create clusterrolebinding cluster-system-anonymous --clusterrole=cluster-admin --user=kubelet-bootstrap

[root@xingdiancloud-native-master-a k8s-work]# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap --kubeconfig=kubelet-bootstrap.kubeconfig

```

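###### 9.3.2 Create the kubelet configuration file

Note:

Run on all worker nodes. The three files differ only in the "address" field, which must be the node's own IP.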

```shell

[root@xingdiancloud-native-node-a ~]# mkdir -p /etc/kubernetes/ssl

# Configuration file for xingdiancloud-native-node-a:

[root@xingdiancloud-native-node-a ~]# cat > /etc/kubernetes/kubelet.json << "EOF"

{

"kind": "KubeletConfiguration",

"apiVersion": "kubelet.config.k8s.io/v1beta1",

"authentication": {

"x509": {

"clientCAFile": "/etc/kubernetes/ssl/ca.pem"

},

"webhook": {

"enabled": true,

"cacheTTL": "2m0s"

},

"anonymous": {

"enabled": false

}

},

"authorization": {

"mode": "Webhook",

"webhook": {

"cacheAuthorizedTTL": "5m0s",

"cacheUnauthorizedTTL": "30s"

}

},

"address": "10.9.12.66",

"port": 10250,

"readOnlyPort": 10255,

"cgroupDriver": "systemd",

"hairpinMode": "promiscuous-bridge",

"serializeImagePulls": false,

"clusterDomain": "cluster.local.",

"clusterDNS": ["10.96.0.2"]

}

EOF

# Configuration file for xingdiancloud-native-node-b:

[root@xingdiancloud-native-node-b ~]# cat > /etc/kubernetes/kubelet.json << "EOF"

{

"kind": "KubeletConfiguration",

"apiVersion": "kubelet.config.k8s.io/v1beta1",

"authentication": {

"x509": {

"clientCAFile": "/etc/kubernetes/ssl/ca.pem"

},

"webhook": {

"enabled": true,

"cacheTTL": "2m0s"

},

"anonymous": {

"enabled": false

}

},

"authorization": {

"mode": "Webhook",

"webhook": {

"cacheAuthorizedTTL": "5m0s",

"cacheUnauthorizedTTL": "30s"

}

},

"address": "10.9.12.65",

"port": 10250,

"readOnlyPort": 10255,

"cgroupDriver": "systemd",

"hairpinMode": "promiscuous-bridge",

"serializeImagePulls": false,

"clusterDomain": "cluster.local.",

"clusterDNS": ["10.96.0.2"]

}

EOF

# Configuration file for xingdiancloud-native-node-c:

[root@xingdiancloud-native-node-c ~]# cat > /etc/kubernetes/kubelet.json << "EOF"

{

"kind": "KubeletConfiguration",

"apiVersion": "kubelet.config.k8s.io/v1beta1",

"authentication": {

"x509": {

"clientCAFile": "/etc/kubernetes/ssl/ca.pem"

},

"webhook": {

"enabled": true,

"cacheTTL": "2m0s"

},

"anonymous": {

"enabled": false

}

},

"authorization": {

"mode": "Webhook",

"webhook": {

"cacheAuthorizedTTL": "5m0s",

"cacheUnauthorizedTTL": "30s"

}

},

"address": "10.9.12.67",

"port": 10250,

"readOnlyPort": 10255,

"cgroupDriver": "systemd",

"hairpinMode": "promiscuous-bridge",

"serializeImagePulls": false,

"clusterDomain": "cluster.local.",

"clusterDNS": ["10.96.0.2"]

}

EOF

```
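
Since only the `address` value changes from node to node, the same file can be produced by one parameterised function instead of three hand-edited copies. This is a sketch under that assumption; `write_kubelet_config` and its arguments are illustrative names, and every other field is copied from the configuration above.

```shell
# write_kubelet_config NODE_IP [OUTPUT_FILE]
# Writes the kubelet configuration shown above with "address" set to NODE_IP.
# OUTPUT_FILE defaults to /etc/kubernetes/kubelet.json.
write_kubelet_config() {
  node_ip="$1"
  out="${2:-/etc/kubernetes/kubelet.json}"
  cat > "$out" << EOF
{
  "kind": "KubeletConfiguration",
  "apiVersion": "kubelet.config.k8s.io/v1beta1",
  "authentication": {
    "x509": {
      "clientCAFile": "/etc/kubernetes/ssl/ca.pem"
    },
    "webhook": {
      "enabled": true,
      "cacheTTL": "2m0s"
    },
    "anonymous": {
      "enabled": false
    }
  },
  "authorization": {
    "mode": "Webhook",
    "webhook": {
      "cacheAuthorizedTTL": "5m0s",
      "cacheUnauthorizedTTL": "30s"
    }
  },
  "address": "${node_ip}",
  "port": 10250,
  "readOnlyPort": 10255,
  "cgroupDriver": "systemd",
  "hairpinMode": "promiscuous-bridge",
  "serializeImagePulls": false,
  "clusterDomain": "cluster.local.",
  "clusterDNS": ["10.96.0.2"]
}
EOF
}

# Usage on each worker, passing that node's own IP, for example on node-a:
#   write_kubelet_config 10.9.12.66
```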

###### 9.3.3 Create the kubelet systemd unit file

Create the kubelet working directory on every worker node:

```shell

[root@xingdiancloud-native-node-a ~]# mkdir /var/lib/kubelet

```

Create the kubelet unit file on every worker node:

```shell

[root@xingdiancloud-native-node-a ~]# cat > /usr/lib/systemd/system/kubelet.service << "EOF"
[Unit]
Description=Kubernetes Kubelet
Documentation=https://github.com/kubernetes/kubernetes
After=docker.service
Requires=docker.service

[Service]
WorkingDirectory=/var/lib/kubelet
ExecStart=/usr/local/bin/kubelet \
  --bootstrap-kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig \
  --cert-dir=/etc/kubernetes/ssl \
  --kubeconfig=/etc/kubernetes/kubelet.kubeconfig \
  --config=/etc/kubernetes/kubelet.json \
  --container-runtime-endpoint=unix:///run/cri-dockerd.sock \
  --rotate-certificates \
  --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.9 \
  --v=2
Restart=on-failure
RestartSec=5

[Install]
WantedBy=multi-user.target
EOF

```

###### 9.3.4 Sync the files to the cluster nodes

Copy kubelet-bootstrap.kubeconfig and ca.pem, both generated on xingdiancloud-native-master-a, to the worker nodes:

```shell

[root@xingdiancloud-native-master-a k8s-work]# for i in xingdiancloud-native-node-a xingdiancloud-native-node-b xingdiancloud-native-node-c;do scp kubelet-bootstrap.kubeconfig $i:/etc/kubernetes/;done

[root@xingdiancloud-native-master-a k8s-work]# for i in xingdiancloud-native-node-a xingdiancloud-native-node-b xingdiancloud-native-node-c;do scp ca.pem $i:/etc/kubernetes/ssl;done

```

Distribute the binaries to the worker nodes:

```shell

[root@xingdiancloud-native-master-a bin]# for i in xingdiancloud-native-node-a xingdiancloud-native-node-b xingdiancloud-native-node-c;do scp kubelet kube-proxy $i:/usr/local/bin/;done

```

###### 9.3.5 Start the service

Note: run on all worker nodes.

```shell

[root@xingdiancloud-native-node-a ~]# systemctl daemon-reload

[root@xingdiancloud-native-node-a ~]# systemctl enable --now kubelet

[root@xingdiancloud-native-node-a ~]# systemctl status kubelet

● kubelet.service - Kubernetes Kubelet

Loaded: loaded (/usr/lib/systemd/system/kubelet.service; enabled; vendor preset: disabled)

Active: active (running) since Fri 2024-01-05 09:21:20 CST; 3min 40s ago

Docs: https://github.com/kubernetes/kubernetes

Main PID: 6177 (kubelet)

CGroup: /system.slice/kubelet.service

└─6177 /usr/local/bin/kubelet --bootstrap-kubeconfig=/etc/kubernetes/kubelet-bootstrap.kubeconfig --cert-dir=/etc/kubernetes/ssl --kubeconfig=/etc/kub...

Jan 05 09:24:11 k8s-node1 kubelet[6177]: E0105 09:24:11.632795 6177 kubelet.go:2855] "Container runtime network not ready" networkReady="NetworkRead...itialized"

Jan 05 09:24:16 k8s-node1 kubelet[6177]: E0105 09:24:16.633986 6177 kubelet.go:2855] "Container runtime network not ready" networkReady="NetworkRead...itialized"

Jan 05 09:24:21 k8s-node1 kubelet[6177]: E0105 09:24:21.713576 6177 kubelet.go:2855] "Container runtime network not ready" networkReady="NetworkRead...itialized"

Jan 05 09:24:26 k8s-node1 kubelet[6177]: E0105 09:24:26.714288 6177 kubelet.go:2855] "Container runtime network not ready" networkReady="NetworkRead...itialized"

Jan 05 09:24:31 k8s-node1 kubelet[6177]: E0105 09:24:31.715295 6177 kubelet.go:2855] "Container runtime network not ready" networkReady="NetworkRead...itialized"

Jan 05 09:24:36 k8s-node1 kubelet[6177]: E0105 09:24:36.717562 6177 kubelet.go:2855] "Container runtime network not ready" networkReady="NetworkRead...itialized"

Jan 05 09:24:41 k8s-node1 kubelet[6177]: E0105 09:24:41.718346 6177 kubelet.go:2855] "Container runtime network not ready" networkReady="NetworkRead...itialized"

Jan 05 09:24:46 k8s-node1 kubelet[6177]: E0105 09:24:46.719040 6177 kubelet.go:2855] "Container runtime network not ready" networkReady="NetworkRead...itialized"

Jan 05 09:24:51 k8s-node1 kubelet[6177]: E0105 09:24:51.721244 6177 kubelet.go:2855] "Container runtime network not ready" networkReady="NetworkRead...itialized"

Jan 05 09:24:56 k8s-node1 kubelet[6177]: E0105 09:24:56.722712 6177 kubelet.go:2855] "Container runtime network not ready" networkReady="NetworkRead...itialized"

Hint: Some lines were ellipsized, use -l to show in full.

```

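All worker nodes have joined the cluster. kubelet is not installed on the masters, which act only as control-plane nodes, so they do not appear in the node list. The nodes stay NotReady until a network plugin is deployed, which matches the "Container runtime network not ready" messages in the kubelet log above.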

```shell

[root@xingdiancloud-native-master-a k8s-work]# kubectl get nodes

NAME                          STATUS     ROLES    AGE     VERSION
xingdiancloud-native-node-a   NotReady   <none>   3m59s   v1.28.0
xingdiancloud-native-node-b   NotReady   <none>   43s     v1.28.0
xingdiancloud-native-node-c   NotReady   <none>   43s     v1.28.0

```
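
When re-checking the cluster after later steps, it can be handy to list only the nodes that are not yet Ready. The filter below is a minimal sketch that parses the default `kubectl get nodes` table; `not_ready_nodes` is a hypothetical helper name, and a status such as `Ready,SchedulingDisabled` would also be reported because the comparison is exact.

```shell
# Read `kubectl get nodes` output on stdin and print the names of nodes whose
# STATUS column is not exactly "Ready". The header row is skipped.
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Usage on a master with a working kubeconfig:
#   kubectl get nodes | not_ready_nodes
```

An empty result means every listed node reports Ready.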

##### 9.4 Deploy kube-proxy

###### 9.4.1 Create the kube-proxy certificate signing request file

Note:

Run on xingdiancloud-native-master-a.

```shell

[root@xingdiancloud-native-master-a k8s-work]# cat > kube-proxy-csr.json << "EOF"

{

"CN": "system:kube-proxy",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "kubemsb",

"OU": "CN"

}

]

}

EOF

```

###### 9.4.2 Generate the certificate

Note:

Run on xingdiancloud-native-master-a.

```shell

[root@xingdiancloud-native-master-a k8s-work]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

[root@xingdiancloud-native-master-a k8s-work]# ls kube-proxy*

kube-proxy.csr kube-proxy-csr.json kube-proxy-key.pem kube-proxy.pem

```

###### 9.4.3 Create the kubeconfig file

```shell

[root@xingdiancloud-native-master-a k8s-work]# kubectl config set-cluster kubernetes --certificate-authority=ca.pem --embed-certs=true --server=https://10.9.12.100:6443 --kubeconfig=kube-proxy.kubeconfig

[root@xingdiancloud-native-master-a k8s-work]# kubectl config set-credentials kube-proxy --client-certificate=kube-proxy.pem --client-key=kube-proxy-key.pem --embed-certs=true --kubeconfig=kube-proxy.kubeconfig

[root@xingdiancloud-native-master-a k8s-work]# kubectl config set-context default --cluster=kubernetes --user=kube-proxy --kubeconfig=kube-proxy.kubeconfig

[root@xingdiancloud-native-master-a k8s-work]# kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

```
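kube-proxy reaches the API server through the VIP, so it can be worth confirming which endpoint ended up in the generated file before copying it to the workers. The following is a minimal sketch, assuming the usual kubeconfig layout with a `server:` key. The helper name `kubeconfig_server` is hypothetical, and the demo reads a trimmed stand-in file, not the real `kube-proxy.kubeconfig`.

```shell
# Print the value of every "server:" key found in a kubeconfig file.
kubeconfig_server() {
    awk '$1 == "server:" { print $2 }' "$1"
}

# Demo on a trimmed stand-in file written to a scratch directory.
demo_dir=$(mktemp -d)
cat > "$demo_dir/kube-proxy.kubeconfig" << 'EOF'
apiVersion: v1
kind: Config
clusters:
- cluster:
    server: https://10.9.12.100:6443
  name: kubernetes
EOF
kubeconfig_server "$demo_dir/kube-proxy.kubeconfig"
rm -rf "$demo_dir"
```

Against the real file, run `kubeconfig_server kube-proxy.kubeconfig` in the k8s-work directory and compare the result with the VIP from the host plan.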

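###### 9.4.4 Create the service configuration file

Note:

Configure on the worker nodes; bindAddress, healthzBindAddress, and metricsBindAddress use each node's own IP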

```shell

[root@xingdiancloud-native-node-a ~]# cat > /etc/kubernetes/kube-proxy.yaml << "EOF"

apiVersion: kubeproxy.config.k8s.io/v1alpha1

bindAddress: 10.9.12.66

clientConnection:

  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig

clusterCIDR: 10.244.0.0/16

healthzBindAddress: 10.9.12.66:10256

kind: KubeProxyConfiguration

metricsBindAddress: 10.9.12.66:10249

mode: "ipvs"

EOF

[root@xingdiancloud-native-node-b ~]# cat > /etc/kubernetes/kube-proxy.yaml << "EOF"

apiVersion: kubeproxy.config.k8s.io/v1alpha1

bindAddress: 10.9.12.65

clientConnection:

  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig

clusterCIDR: 10.244.0.0/16

healthzBindAddress: 10.9.12.65:10256

kind: KubeProxyConfiguration

metricsBindAddress: 10.9.12.65:10249

mode: "ipvs"

EOF

[root@xingdiancloud-native-node-c ~]# cat > /etc/kubernetes/kube-proxy.yaml << "EOF"

apiVersion: kubeproxy.config.k8s.io/v1alpha1

bindAddress: 10.9.12.67

clientConnection:

  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig

clusterCIDR: 10.244.0.0/16

healthzBindAddress: 10.9.12.67:10256

kind: KubeProxyConfiguration

metricsBindAddress: 10.9.12.67:10249

mode: "ipvs"

EOF

```
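The three files above differ only in the node IP, so they could also be rendered from one template. The sketch below is one way to do that, not the author's method: the function name `write_kube_proxy_config` and its two arguments are hypothetical, and the field values are copied from the files above. Note that `kubeconfig` is nested under `clientConnection`.

```shell
# Write a kube-proxy configuration for one node.
#   $1 = node IP, $2 = output file
write_kube_proxy_config() {
    node_ip=$1
    out_file=$2
    cat > "$out_file" << EOF
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
bindAddress: ${node_ip}
clientConnection:
  kubeconfig: /etc/kubernetes/kube-proxy.kubeconfig
clusterCIDR: 10.244.0.0/16
healthzBindAddress: ${node_ip}:10256
metricsBindAddress: ${node_ip}:10249
mode: "ipvs"
EOF
}

# Demo: render the file for node-a into a scratch directory and print it.
scratch=$(mktemp -d)
write_kube_proxy_config 10.9.12.66 "$scratch/kube-proxy.yaml"
cat "$scratch/kube-proxy.yaml"
rm -rf "$scratch"
```

On a worker, the second argument would be `/etc/kubernetes/kube-proxy.yaml` and the first the IP of that node from the host plan.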

###### 9.4.5 Create the systemd unit file

Note:

Configure on every worker node (node-a is shown as the example)

```shell

[root@xingdiancloud-native-node-a ~]# mkdir -p /var/lib/kube-proxy

```

```shell

[root@xingdiancloud-native-node-a ~]# cat > /usr/lib/systemd/system/kube-proxy.service << "EOF"

[Unit]

Description=Kubernetes Kube-Proxy Server

Documentation=https://github.com/kubernetes/kubernetes

After=network.target

[Service]

WorkingDirectory=/var/lib/kube-proxy

ExecStart=/usr/local/bin/kube-proxy \
  --config=/etc/kubernetes/kube-proxy.yaml \
  --v=2

Restart=on-failure

RestartSec=5

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

```

###### 9.4.6 Sync the files to the worker nodes

Note:

Run on xingdiancloud-native-master-a

```shell

[root@xingdiancloud-native-master-a k8s-work]# ls kube-proxy*

kube-proxy.csr kube-proxy-csr.json kube-proxy-key.pem kube-proxy.kubeconfig kube-proxy.pem

[root@xingdiancloud-native-master-a k8s-work]# for i in xingdiancloud-native-node-a xingdiancloud-native-node-b xingdiancloud-native-node-c;do scp kube-proxy.kubeconfig $i:/etc/kubernetes/;done

[root@xingdiancloud-native-master-a k8s-work]# for i in xingdiancloud-native-node-a xingdiancloud-native-node-b xingdiancloud-native-node-c;do scp kube-proxy*pem $i:/etc/kubernetes/ssl; done

```

###### 9.4.7 Start the service

Note:

Run on all worker nodes

```shell

[root@xingdiancloud-native-node-a ~]# systemctl daemon-reload

[root@xingdiancloud-native-node-a ~]# systemctl enable --now kube-proxy

[root@xingdiancloud-native-node-a ~]# systemctl status kube-proxy

● kube-proxy.service - Kubernetes Kube-Proxy Server

Loaded: loaded (/usr/lib/systemd/system/kube-proxy.service; enabled; vendor preset: disabled)

Active: active (running) since Fri 2024-01-05 10:53:12 CST; 38s ago

Docs: https://github.com/kubernetes/kubernetes

Main PID: 11727 (kube-proxy)

Tasks: 5

Memory: 17.1M

CGroup: /system.slice/kube-proxy.service

└─11727 /usr/local/bin/kube-proxy --config=/etc/kubernetes/kube-proxy.yaml --v=2

Jan 05 10:53:12 k8s-node1 kube-proxy[11727]: I0105 10:53:12.551675 11727 shared_informer.go:311] Waiting for caches to sync for endpoint slice config

Jan 05 10:53:12 k8s-node1 kube-proxy[11727]: I0105 10:53:12.552316 11727 config.go:315] "Starting node config controller"

Jan 05 10:53:12 k8s-node1 kube-proxy[11727]: I0105 10:53:12.552333 11727 shared_informer.go:311] Waiting for caches to sync for node config

Jan 05 10:53:12 k8s-node1 kube-proxy[11727]: I0105 10:53:12.569095 11727 proxier.go:925] "Not syncing ipvs rules until Services and Endpoints have bee...m master"

Jan 05 10:53:12 k8s-node1 kube-proxy[11727]: I0105 10:53:12.569432 11727 proxier.go:925] "Not syncing ipvs rules until Services and Endpoints have bee...m master"

Jan 05 10:53:12 k8s-node1 kube-proxy[11727]: I0105 10:53:12.652277 11727 shared_informer.go:318] Caches are synced for endpoint slice config

Jan 05 10:53:12 k8s-node1 kube-proxy[11727]: I0105 10:53:12.652318 11727 proxier.go:925] "Not syncing ipvs rules until Services and Endpoints have bee...m master"

Jan 05 10:53:12 k8s-node1 kube-proxy[11727]: I0105 10:53:12.652328 11727 proxier.go:925] "Not syncing ipvs rules until Services and Endpoints have bee...m master"

Jan 05 10:53:12 k8s-node1 kube-proxy[11727]: I0105 10:53:12.652339 11727 shared_informer.go:318] Caches are synced for service config

Jan 05 10:53:12 k8s-node1 kube-proxy[11727]: I0105 10:53:12.652544 11727 shared_informer.go:318] Caches are synced for node config

Hint: Some lines were ellipsized, use -l to show in full.

```

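##### 9.5 Deploy the network component: Calico

Note:

Download the matching YAML files from the official Calico site and create the resources from a master node

Download page: https://docs.tigera.io/calico/latest/about

Choose Calico v3.26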

```shell

# Install commands copied from the Calico docs for reference; do not run them directly

kubectl create -f https://raw.githubusercontent.com/projectcalico/calico/v3.26.4/manifests/tigera-operator.yaml

kubectl create -f https://raw.githubusercontent.com/projectcalico/calico/v3.26.4/manifests/custom-resources.yaml

# Download the files with wget first, check that they are intact, then deploy

[root@xingdiancloud-native-master-a k8s-work]# wget https://raw.githubusercontent.com/projectcalico/calico/v3.26.4/manifests/tigera-operator.yaml

[root@xingdiancloud-native-master-a k8s-work]# wget https://raw.githubusercontent.com/projectcalico/calico/v3.26.4/manifests/custom-resources.yaml

[root@xingdiancloud-native-master-a k8s-work]# ll *yaml

-rw-r--r-- 1 root root 824 Jan 5 13:50 custom-resources.yaml

-rw-r--r-- 1 root root 1475581 Jan 5 13:50 tigera-operator.yaml

```

###### 9.5.1 Modify the file

```shell

# custom-resources.yaml defaults to the Pod network 192.168.0.0/16; this cluster uses 10.244.0.0/16, so edit the file before applying it

# Change "cidr: 192.168.0.0/16" to "cidr: 10.244.0.0/16"

```
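The change can be made in an editor, or scripted. The sketch below is one possible way to script it, assuming the file contains the literal line `cidr: 192.168.0.0/16`. The helper name `set_pod_cidr` is hypothetical, and the demo runs on a stand-in fragment, not the downloaded manifest.

```shell
# Replace the default Calico pool CIDR with the cluster's Pod network.
#   $1 = path to custom-resources.yaml, $2 = new CIDR
set_pod_cidr() {
    # Write to a temporary file, then move it into place.
    sed "s#cidr: 192\.168\.0\.0/16#cidr: $2#" "$1" > "$1.tmp" && mv "$1.tmp" "$1"
}

# Demo on a stand-in fragment written to a scratch directory.
work=$(mktemp -d)
cat > "$work/custom-resources.yaml" << 'EOF'
    ipPools:
    - blockSize: 26
      cidr: 192.168.0.0/16
      encapsulation: VXLANCrossSubnet
EOF
set_pod_cidr "$work/custom-resources.yaml" 10.244.0.0/16
grep 'cidr:' "$work/custom-resources.yaml"
rm -rf "$work"
```

In the k8s-work directory the call would be `set_pod_cidr custom-resources.yaml 10.244.0.0/16`, followed by a `grep cidr custom-resources.yaml` to confirm the result.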

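Note:

Docker Hub is blocked in mainland China

The Calico images are pulled from Docker Hub by default

Prepare the required images in advance and import them on every worker node before applying the files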
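One way to prepare the images is to pull and export them on a machine that can reach the registry, then copy the tarballs to the workers. The sketch below only prints the `docker pull` and `docker save` commands so they can be reviewed first. The helper name `print_image_export_cmds` and the tarball naming scheme are hypothetical, and the two image references in the demo are examples only; the full list depends on the Calico version and manifests in use.

```shell
# Print (do not run) the docker commands that export each image to a tarball.
# Reads one image reference per line on stdin.
print_image_export_cmds() {
    while IFS= read -r image; do
        [ -n "$image" ] || continue
        # Build a file name by replacing "/" and ":" with "_".
        tarball="$(printf '%s' "$image" | tr '/:' '__').tar"
        printf 'docker pull %s\n' "$image"
        printf 'docker save -o %s %s\n' "$tarball" "$image"
    done
}

# Demo with two example image references.
printf '%s\n' calico/node:v3.26.4 calico/cni:v3.26.4 | print_image_export_cmds
```

After copying a tarball to a worker node, `docker load -i <tarball>` imports it into the local image store.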

###### 9.5.2 Apply the files

```shell

# Apply tigera-operator.yaml

[root@xingdiancloud-native-master-a k8s-work]# kubectl create -f tigera-operator.yaml

namespace/tigera-operator created

customresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/bgpfilters.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/bgppeers.crd.projectcalico.org created